It’s 2:47 AM on a Tuesday, and you’re about to learn why crash resilience engineering matters more than any other technical investment you’ll make this year.

Your phone is blowing up. The on-call engineer just paged you. The release you pushed six hours ago — the one that passed all the tests, got through code review, and deployed without a single error — is now causing a cascade of crashes that’s taking down production for 40,000 users.

You scramble into a Slack war room. Someone shares a stack trace. Someone else shares a different stack trace. Nobody agrees on what’s actually broken. The CEO is asking for updates. Your customer success team is fielding furious emails. And your best senior engineer is staring at a monitoring dashboard muttering “this doesn’t make sense.”

Sound familiar?

Here’s the uncomfortable truth: this scenario isn’t bad luck. It’s a predictable outcome of treating crashes as random events rather than structured, classifiable failure modes. The difference between teams that spend every Tuesday at 2:47 AM in a war room and teams that sleep soundly isn’t talent or tooling — it’s philosophy.

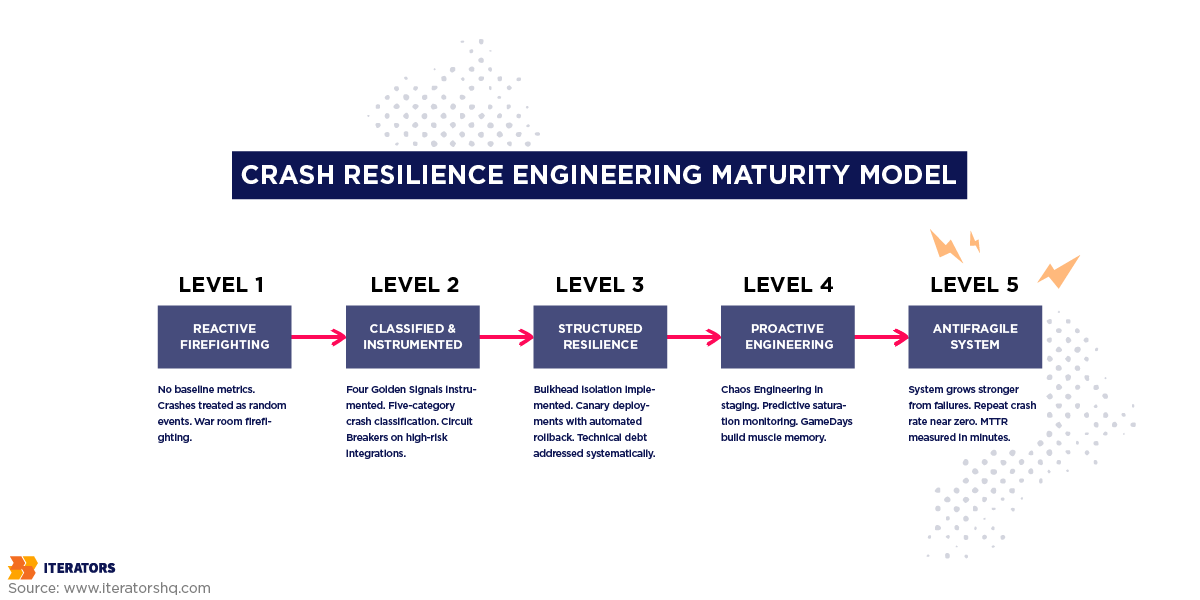

Crash resilience engineering is the discipline of moving from reactive firefighting to proactive architectural mastery. It’s about recognizing that crashes cluster around predictable events, classifying them into actionable categories, building structural defenses before failures arrive, and using telemetry data to make your system fundamentally harder to break — not just slightly less broken.

This guide covers everything: why crashes aren’t random, how to classify them, which design patterns actually prevent cascading failures, what your crash telemetry should be telling you, and how to build a culture that turns every outage into an architectural improvement rather than a blame session.

If your team is drowning in crash reports, reacting to the same categories of failures release after release, or struggling to translate raw stack traces into strategic decisions — this is for you.

The Hidden Patterns: Why Crashes Aren’t Random

Let’s start with the most important mental model shift in crash resilience engineering.

Crashes feel random. They arrive without warning. They don’t respect business hours. They seem to materialize from nowhere and then — after hours of frantic debugging — turn out to be caused by something absurdly mundane, like a configuration value that changed in an environment variable nobody documented.

But they’re not random. They cluster. And they cluster around three predictable triggers.

The Three Crash Triggers

Releases. The most common crash trigger is also the most obvious one that teams consistently underestimate. Every deployment is a change event. Change events introduce risk. The more frequently you deploy without proper observability, the more often you’ll experience release-correlated crash spikes. The CrowdStrike incident of July 2024 — which disabled 8.5 million Windows systems globally and caused over $10 billion in financial losses — was triggered by a single faulty configuration update pushed to the Falcon Sensor. One change. Global blast radius.

Infrastructure modifications. Database migrations, cloud provider configuration changes, network topology updates, Kubernetes cluster upgrades — these are all change events with delayed failure signatures. Unlike release crashes that surface within minutes, infrastructure change failures often manifest hours or days later, making root cause analysis significantly harder. A DNS record change that causes intermittent connection timeouts under load might not produce visible crash spikes until traffic reaches a certain threshold.

User growth spikes. Traffic spikes expose architectural assumptions that held true at lower scale. A database connection pool sized for 500 concurrent users will catastrophically exhaust when 5,000 users hit the system simultaneously. A memory allocation pattern that worked fine at baseline suddenly triggers garbage collection storms. Resource exhaustion failures are particularly insidious because they often produce symptoms — timeouts, 503 errors, hanging requests — that look identical to several other failure categories, making them difficult to diagnose quickly.

The common denominator across all three triggers is change. Systems crash when their environment changes in ways their architecture wasn’t designed to handle.

The Blast Radius Concept

Before we get into classification frameworks, you need to internalize one concept: blast radius.

Blast radius describes how far a failure propagates through your system before it’s contained. A localized failure with a small blast radius affects one service and stops there. A failure with a large blast radius cascades through dependency chains, consuming resources in upstream services, triggering retry storms, and eventually taking down systems that had nothing to do with the original fault.

The July 2024 CrowdStrike incident had an essentially unlimited blast radius because the failure occurred at the operating system level, below all application-layer defenses. Most production crashes don’t start there — they start in application logic, data handling, or infrastructure — but without proper architectural containment, they can achieve similarly catastrophic propagation.

Understanding blast radius is what separates engineers who think about individual bugs from engineers who think about system resilience. The question isn’t just “what broke?” — it’s “how far did it spread, and why?”

Hidden Dependencies and the Assumption Problem

Systems have dependencies that nobody wrote down. An authentication service that was always fast enough that nobody implemented a timeout. A third-party analytics provider that was treated as non-critical but somehow ended up blocking the main request path. A shared database connection pool that three different services are competing for without anyone realizing it.

These hidden dependencies are where crash resilience engineering does its most important work. The discipline demands that you map your system’s actual dependency graph — not the one in the architecture diagram that was last updated two years ago, but the real one, discovered through distributed tracing and production telemetry — and then systematically evaluate what happens when each dependency fails.

The Google SRE Book frames this as monitoring for symptoms rather than causes. In a complex distributed system, the cause of a failure is often several layers removed from the symptom your users experience. Monitoring for “database query latency” is monitoring for a cause. Monitoring for “user-facing request success rate” is monitoring for a symptom. The Four Golden Signals — Latency, Traffic, Errors, and Saturation — are all symptom-oriented metrics. They tell you when users are experiencing problems, which is what you actually care about.

Crash Resilience Engineering Classification: From Stack Traces to Strategy

Here’s the problem with most crash management workflows: engineers triage individual stack traces rather than patterns.

An alert fires. Someone looks at the stack trace. They find the line that threw the exception. They fix that line. They deploy. Another alert fires. Different stack trace. Different line. Same root cause.

The fix-the-line approach treats every crash as a unique, isolated event. Crash resilience engineering treats crashes as signals about systemic architectural weaknesses. To do that, you need a classification framework that maps symptoms to categories and categories to structural remediation strategies.

The Five Crash Categories in Crash Resilience Engineering

Infrastructure Failures

These are hardware degradation events, network partitioning, DNS resolution timeouts, and cloud provider zone outages. They’re characterized by widespread connection drops across multiple dependent services simultaneously.

Infrastructure failures typically have the largest blast radius of any crash category. When your application loses connectivity to its data layer, everything stops. The good news: infrastructure failures are also the most predictable category, and the one for which the most mature resilience patterns exist. Multi-region failovers, active-active redundancy, and fail-open routing protocols are all well-understood solutions.

The AWS Builders Library highlights a particularly nasty infrastructure failure pattern: the “black hole” effect. When a server becomes unhealthy, it often starts returning explicit error pages very rapidly — much faster than a healthy server processing complex logic. Load balancers using “least requests” routing will then funnel massive amounts of traffic to the broken, fast-responding server. The result: a single unhealthy node effectively paralyzes the entire cluster.

AWS’s recommendation is fail-open behavior: automated systems should stop routing traffic to a single bad node, but if the entire fleet appears to be failing simultaneously, they should allow traffic to flow rather than triggering a false positive that takes down the whole application.
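A minimal sketch of that fail-open rule, in Python. The function name and the `fail_open_threshold` knob are illustrative assumptions, not part of any AWS API; the source only says to fail open when the whole fleet appears to be failing, so the 50% cutoff here is a placeholder you would tune.

```python
def routable_nodes(health: dict[str, bool], fail_open_threshold: float = 0.5) -> list[str]:
    """Return the nodes that should receive traffic.

    `health` maps a node name to its latest health-check result.
    `fail_open_threshold` is a hypothetical tuning knob: if more than this
    fraction of the fleet reports unhealthy, the check itself is suspect.
    """
    if not health:
        return []
    unhealthy = [name for name, ok in health.items() if not ok]
    if len(unhealthy) / len(health) > fail_open_threshold:
        # Fail open: a mostly-unhealthy fleet more likely points to a broken
        # health check or a shared dependency than to many independent faults.
        return sorted(health)
    # Normal case: route only to nodes that passed their health check.
    return sorted(name for name, ok in health.items() if ok)
```

With one bad node out of three, only the two healthy nodes are returned. With all three failing, every node stays in rotation so a faulty health check cannot take down the whole application.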

Logic Failures

Application-level defects: unhandled exceptions, null pointer references, algorithmic infinite loops, and unvalidated state transitions. These are the crashes that most developers instinctively think of when they hear “crash” — the ones that produce clean stack traces pointing to specific lines of code.

Logic failures are typically release-correlated. They surface in the minutes following a deployment. They have moderate blast radius, generally isolated to the specific microservice executing the flawed code — unless upstream services lack proper timeout policies, in which case the blast radius expands significantly.

The key insight for logic failure resilience is that not all logic failures are created equal. A null pointer exception in a non-critical recommendation engine has a completely different business impact than a null pointer exception in the payment processing flow. Classification must include business impact assessment, not just technical severity.

Data and Semantic Failures

Schema evolution mismatches, corrupted payload deserialization, database deadlocks, and predictive data quality anomalies. These are the sneaky ones — they often produce silent corruption rather than hard crashes, which makes them significantly more dangerous.

A service that crashes loudly is immediately visible. A service that continues running while serving corrupted data can go undetected for hours or days, during which time the corruption propagates through downstream systems and potentially into user-facing data stores.

The Google SRE Book recommends out-of-band data validation pipelines — map-reduce jobs or background validation agents that continuously verify data integrity against metadata-aware rules — specifically to catch this category of failure before it reaches users. This is the kind of proactive detection that transforms potential catastrophic data corruption events into routine maintenance tasks.

Integration Brittleness

Breaches of API contracts, third-party vendor service outages, and rigid structural assumptions about payload parameter ordering. Research on fault characterization in complex agentic ecosystems shows that integration failures account for approximately 19.5% of all root cause failures.

Integration brittleness is particularly prevalent in modern microservices architectures because every service boundary is a potential failure point. When Service A makes a synchronous HTTP call to Service B, A’s availability becomes dependent on B’s availability. If B calls C, A’s availability becomes dependent on C. In a sufficiently complex system, a single third-party analytics provider going offline can cascade into a complete application outage — even if that provider was theoretically “non-critical.”
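To put rough numbers on that dependency chain, here’s a small sketch. It assumes every hop is a hard synchronous dependency and that failures are independent, which real systems rarely satisfy exactly. The function names and the 99.9% figures are illustrative.

```python
from math import prod

HOURS_PER_YEAR = 365 * 24


def chain_availability(availabilities: list[float]) -> float:
    """Availability of a synchronous call chain, assuming independent failures.

    Each value is the fraction of time one service in the chain is up (0 to 1).
    """
    return prod(availabilities)


def expected_downtime_hours(availability: float) -> float:
    """Expected hours of unavailability per year at the given availability."""
    return (1.0 - availability) * HOURS_PER_YEAR
```

Under those assumptions, three services at 99.9% each give the chain roughly 99.7% availability: about 26 hours of expected downtime a year instead of under 9 for any single service.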

Resource Exhaustion

CPU starvation, memory leaks, database connection pool depletion, and operating system file descriptor limits. These failures are typically triggered by user growth spikes or internal retry storms — situations where the system receives more load than its resource allocation was designed to handle.

Resource exhaustion failures are particularly dangerous because they tend to feed on themselves. An exhausted service slows down. Slow responses cause clients to retry. Retries increase load. Increased load makes the service slower. The feedback loop accelerates until the service is completely unresponsive.

Crash Resilience Engineering Classification Framework at a Glance

| Category | Common Symptoms | Blast Radius | Priority | Mitigation Strategy |
|---|---|---|---|---|
| Infrastructure | Widespread connection drops, DNS timeouts, zone outages | Critical | Highest | Active-active redundancy, fail-open routing, GameDay testing |
| Logic | Unhandled exceptions, null pointers, post-release spikes | Moderate to High | High | Canary deployments, automated rollbacks, CI/CD guardrails |
| Data/Semantic | Silent corruption, deserialization errors, deadlocks | Severe (delayed) | Critical | Out-of-band validation, contract testing, schema versioning |
| Integration | API contract failures, third-party outages | High | High | Circuit breakers, bulkhead isolation, async message queues |
| Resource Exhaustion | Timeouts, memory leaks, connection pool depletion | Critical | Highest | Rate limiting, load shedding, exponential backoff |

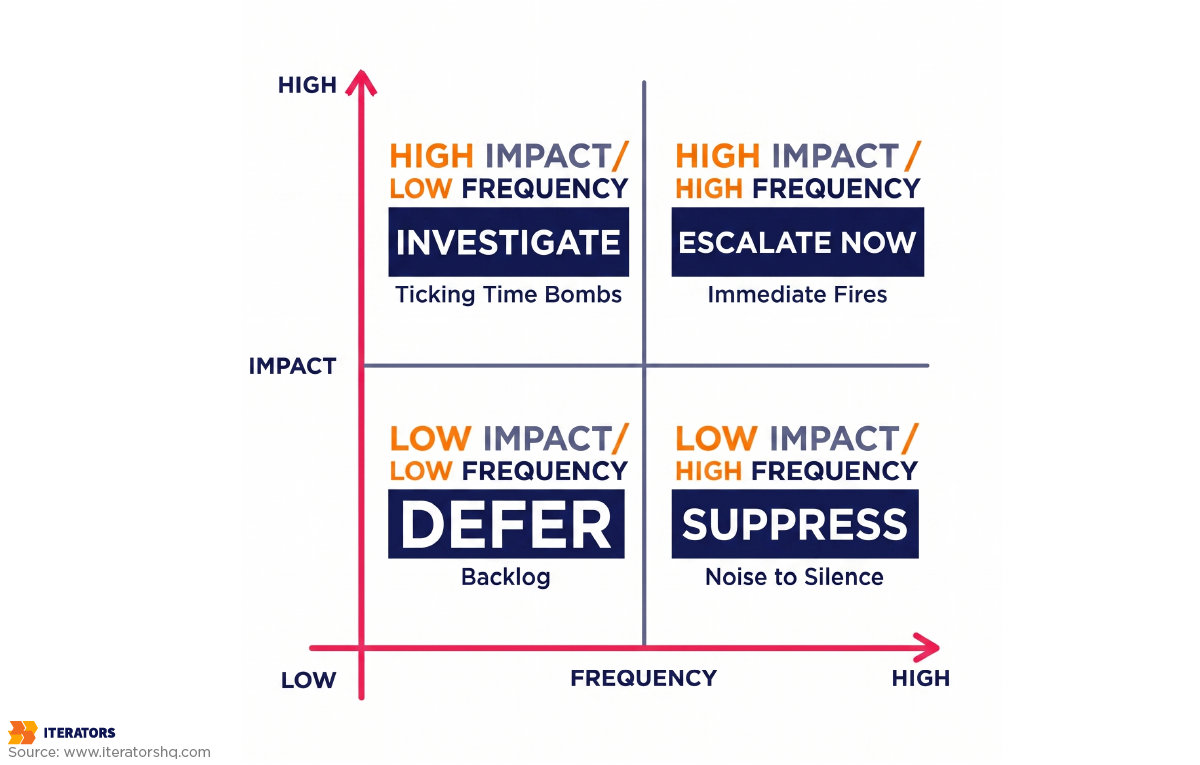

Prioritization: Impact vs. Frequency

One of the most common mistakes in crash management is prioritizing by frequency rather than impact. The crash that fires 500 alerts per day might be a benign edge case affecting a tiny percentage of users. The crash that fires 3 alerts per week might be silently corrupting payment records.

Crash resilience engineering demands a 2×2 prioritization matrix: frequency on one axis, business impact on the other. High-frequency, high-impact crashes are your immediate fires. Low-frequency, high-impact crashes are your ticking time bombs — the ones that keep experienced engineers up at night. High-frequency, low-impact crashes are noise that needs to be silenced so you can see the signal. Low-frequency, low-impact crashes go in the backlog.

The business impact dimension requires input from product and business stakeholders, not just engineering. A crash in the checkout flow can carry many times the business impact of an identical crash in the user profile settings page. Classification frameworks that ignore this context produce technically accurate but strategically useless prioritization.
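The 2×2 matrix can be sketched as a small triage function. The cutoffs and the 1-to-5 impact scale are assumptions for illustration; in practice the impact score comes from the product and business conversation described above.

```python
def triage_quadrant(alerts_per_week: float, impact_score: int,
                    frequency_cutoff: float = 50, impact_cutoff: int = 3) -> str:
    """Place a crash in the frequency/impact matrix.

    `impact_score` is a hypothetical 1-5 business-impact rating; both cutoffs
    are placeholders to be tuned per team.
    """
    high_frequency = alerts_per_week >= frequency_cutoff
    high_impact = impact_score >= impact_cutoff
    if high_impact:
        return "immediate fire" if high_frequency else "ticking time bomb"
    return "noise to silence" if high_frequency else "backlog"
```

A crash firing 500 alerts a day with an impact score of 1 lands in “noise to silence,” while one firing 3 alerts a week with an impact score of 5 lands in “ticking time bomb.”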

Building Resilience: Design Patterns That Prevent Crashes

Once you’ve classified your failures, you need structural countermeasures. These are the architectural patterns that prevent localized failures from cascading into global outages. Think of them as your system’s immune system — not preventing every individual pathogen, but preventing any single infection from killing the host.

The Circuit Breaker Pattern in Crash Resilience Engineering

The Circuit Breaker is the single most important pattern in crash resilience engineering. Research from Usenix SREcon has shown it can reduce cascading production failures by 83.5% when properly implemented. That’s not a small number.

Here’s the problem it solves. In a distributed microservices environment, when a downstream service experiences high latency or repeated timeouts, the upstream service will traditionally continue attempting to connect. Each attempt consumes a thread. The thread pool fills up. New requests can’t be processed. The upstream service crashes — not because of its own code, but because a downstream dependency took it down.

The Circuit Breaker is implemented on the consumer side. It monitors the failure rate of downstream calls. When that rate exceeds a configured threshold, the breaker “trips.”

The pattern operates as a state machine with three states:

Closed (Normal Operation): Requests flow through normally. The circuit breaker monitors failure rates in the background.

Open (Failure Mode): The breaker has tripped. All subsequent calls are immediately rejected without attempting the network connection. The service “fails fast” and returns a predefined fallback response. This protects the struggling downstream service from being bombarded with additional traffic, giving it computational breathing room to recover.

Half-Open (Recovery Testing): After a configured timeout period, the breaker allows a small number of test requests through. If they succeed, it resets to Closed. If they fail, it trips back to Open and restarts the timeout clock.

The fail-fast behavior is critical. An immediate failure is dramatically easier to manage than an infinitely hanging request. When a service fails fast, upstream services can immediately execute fallback logic and continue serving users. When a service hangs indefinitely, it consumes threads and resources until everything upstream eventually times out too.

The key configuration decisions are the failure rate threshold (how many failures before tripping), the open state duration (how long to wait before testing recovery), and the half-open call count (how many test calls to allow). These should be tuned based on your specific service’s SLA requirements and historical failure patterns.
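The three states and three configuration knobs can be sketched in a few dozen lines. This is a simplified illustration rather than a production implementation: it trips on consecutive failures instead of a failure rate over a window, it is not thread-safe, and every class and parameter name is hypothetical.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, open_seconds: float = 30.0,
                 half_open_calls: int = 1,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold  # failures before tripping
        self.open_seconds = open_seconds            # wait before probing recovery
        self.half_open_calls = half_open_calls      # successful probes needed to close
        self.clock = clock
        self.state = "closed"
        self.failures = 0         # consecutive failures while closed
        self.trial_successes = 0  # successful probes while half-open
        self.opened_at = 0.0

    def _trip(self) -> None:
        self.state = "open"
        self.opened_at = self.clock()
        self.failures = 0
        self.trial_successes = 0

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "open":
            if self.clock() - self.opened_at < self.open_seconds:
                # Fail fast without touching the network.
                raise CircuitOpenError("downstream presumed unhealthy")
            self.state = "half_open"
            self.trial_successes = 0
        try:
            result = fn()
        except Exception:
            if self.state == "half_open":
                self._trip()  # probe failed: reopen and restart the clock
            else:
                self.failures += 1
                if self.failures >= self.failure_threshold:
                    self._trip()
            raise
        if self.state == "half_open":
            self.trial_successes += 1
            if self.trial_successes >= self.half_open_calls:
                self.state = "closed"
                self.failures = 0
        else:
            self.failures = 0  # any success resets the consecutive count
        return result
```

Callers catch `CircuitOpenError` and return their predefined fallback response. The injectable `clock` exists only so the open-state timeout can be tested without sleeping.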

The Bulkhead Isolation Pattern

While the Circuit Breaker protects you from making doomed outbound requests, the Bulkhead Isolation pattern protects you from letting one overloaded workload consume the resources every other workload depends on.

The pattern is named after the watertight compartments in ship hulls. If one compartment floods, the bulkheads contain the water and the ship stays afloat. Without bulkheads, a single breach sinks everything.

In software, bulkheads segment application resources — thread pools, connection pools, memory allocations — into isolated compartments dedicated to specific operations. Without bulkheads, a traffic spike to one endpoint can consume the application’s entire global thread pool, starving every other endpoint and crashing the entire service.

With bulkheads, if one service partition experiences resource exhaustion, the damage is contained within that compartment. The rest of the application keeps running.

The most powerful crash resilience implementations combine both patterns: wrap each service call within a Bulkhead isolation compartment that also contains a Circuit Breaker. The Bulkhead prevents resource exhaustion from spreading. The Circuit Breaker prevents repeated failed network attempts from consuming those resources in the first place.
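One simple way to sketch a bulkhead is a semaphore per compartment that rejects work once the compartment is full. The class names and the reject-immediately policy are assumptions; real implementations often use dedicated thread pools or bounded queues instead.

```python
import threading
from typing import Callable, TypeVar

T = TypeVar("T")


class BulkheadFullError(Exception):
    """Raised when a compartment has no free slots."""


class Bulkhead:
    def __init__(self, name: str, max_concurrent: int):
        self.name = name
        # Each compartment owns its slots, so one busy operation type
        # cannot consume capacity reserved for another.
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def run(self, fn: Callable[[], T]) -> T:
        # Reject immediately rather than queueing, so callers fail fast.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError(f"{self.name} compartment is full")
        try:
            return fn()
        finally:
            self._slots.release()
```

Giving each dependency its own `Bulkhead` instance, for example one for payments and one for recommendations, means a flood of recommendation calls is rejected at its own limit while payment calls keep their slots. The callable passed to `run` can be any function, including one already guarded by a circuit breaker.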

Timeouts, Retries, and Exponential Backoff

Every network call and database query must have a timeout. This is non-negotiable in crash resilience engineering. An unconstrained call that hangs indefinitely is a resource leak waiting to become a catastrophic failure.

But timeouts alone aren’t enough. When a timeout fires, naive retry logic can be catastrophic. If a service briefly drops offline and ten thousand clients immediately retry at the exact same millisecond, you’ve effectively launched a self-inflicted DDoS attack against your recovering infrastructure. This is the retry storm problem, and it’s responsible for a significant proportion of recovery-phase crashes — situations where a service was coming back online but got crushed by synchronized retry traffic before it could fully recover.

The solution is exponential backoff with jitter.

Exponential backoff systematically increases the wait duration between retry attempts. First retry waits 1 second. Second retry waits 2 seconds. Third waits 4 seconds. This prevents the synchronized retry storm by spacing out retry attempts over time.

Jitter adds a randomized variable to the wait times. Instead of every client waiting exactly 4 seconds before the third retry, clients wait between 3 and 5 seconds (or some similar randomized range). This disperses retry attempts across a temporal window, preventing even the exponential backoff pattern from creating synchronized load spikes.

The core logic follows this pattern: for each retry attempt, calculate an exponentially increasing base delay, then add a small random jitter component (typically 10% of the delay), and wait that combined duration before the next attempt. After a maximum number of retries, the operation fails definitively rather than retrying forever.
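That logic might look like the following sketch. The function names are illustrative, the jitter is additive and capped at 10% of the base delay to match the description above, and catching every `Exception` is a simplification: real code should retry only errors known to be transient.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def backoff_delay(attempt: int, base: float = 1.0, jitter_fraction: float = 0.1,
                  rng: Callable[[], float] = random.random) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    The base delay doubles each attempt (1s, 2s, 4s, ...); a random amount
    of up to `jitter_fraction` of that delay is added on top.
    """
    delay = base * (2 ** attempt)
    return delay + delay * jitter_fraction * rng()


def retry(fn: Callable[[], T], max_retries: int = 3,
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `fn`, retrying with exponential backoff and jitter on failure."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_retries:
                raise  # fail definitively instead of retrying forever
            sleep(backoff_delay(attempt))
    raise AssertionError("unreachable")
```

The `rng` and `sleep` parameters are there so the timing can be tested deterministically. A wider jitter range spreads clients out more aggressively, at the cost of less predictable retry timing.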

Graceful Degradation and Fallback Paths

The ultimate expression of crash resilience engineering is designing systems that fail gracefully rather than catastrophically. When a peripheral service crashes, your core business functionality should continue operating — just with reduced capability rather than complete unavailability.

This requires explicitly designing fallback paths for every critical user journey. What happens when the recommendation engine crashes? Serve popular items. What happens when the personalization service crashes? Serve generic content. What happens when the real-time inventory check times out? Show “check availability” rather than blocking checkout.

The key insight is that users can tolerate degraded experiences far better than complete failures. A Netflix that serves slightly less personalized recommendations is dramatically better than a Netflix that returns a 500 error. A checkout flow that can’t apply a coupon code in real-time is dramatically better than a checkout flow that hangs indefinitely.

Designing fallback paths requires upfront product thinking about which features are core to the user journey and which are enhancements. This is a conversation that needs to happen between engineering and product — and it needs to happen before a 2:47 AM incident, not during one.
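In code, a fallback path can be as small as a wrapper that reports whether the response was degraded. This is a sketch with hypothetical names; which exceptions count as “degrade rather than fail” is a product decision, and catching everything here is only for brevity.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def with_fallback(primary: Callable[[], T],
                  fallback: Callable[[], T]) -> tuple[T, bool]:
    """Return (value, degraded).

    `degraded` is True when the primary path failed and the fallback was
    served, so the caller can record how often users see reduced capability.
    """
    try:
        return primary(), False
    except Exception:
        return fallback(), True
```

A recommendation endpoint would pass the personalized lookup as `primary` and a cached list of popular items as `fallback`. Tracking the `degraded` flag over time shows how often the core journey is running on its fallback.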

Resilience Pattern Comparison

| Pattern | Protects Against | When to Use | Complexity |
|---|---|---|---|
| Circuit Breaker | Downstream service failures cascading upstream | Any synchronous service-to-service call | Medium |
| Bulkhead Isolation | Resource exhaustion from traffic spikes | High-traffic services with multiple operation types | Medium |
| Exponential Backoff + Jitter | Retry storms during recovery | Any operation with retry logic | Low |
| Graceful Degradation | Peripheral service failures impacting core journeys | User-facing features with non-critical dependencies | High |
| Rate Limiting | Intentional or accidental traffic overload | Public APIs and shared internal services | Low-Medium |
| Load Shedding | Resource exhaustion under extreme load | Services with hard resource constraints | Medium-High |

The Right Data: What Crash Telemetry Should Tell You

You can have every design pattern in this article implemented perfectly and still be flying blind if your telemetry is wrong. Crash resilience engineering is only as good as the data feeding it.

Beyond Crash Count: The Metrics That Actually Matter

Most teams track crash count. Some teams track crash rate (crashes per session or per request). These are necessary but insufficient.

The metrics that drive architectural decisions in crash resilience engineering are:

Crash-Free Session Rate (CFS): The percentage of user sessions that complete without a crash. App stores treat stability as a quality signal; Google Play, for example, factors crash rate into an app’s visibility. Research shows that applications maintaining a CFS rate above 99.95% consistently achieve ratings above 4.5 stars, while applications that drop below 99.70% see ratings plunge below 3.0 stars. The 99.70% threshold matters: that is where negative user reviews begin to cascade, and below it you’re in active brand damage territory.

Mean Time to Recovery (MTTR): How long it takes from the moment a crash is detected to the moment it’s resolved. MTTR is a lagging indicator of your team’s resilience capabilities. Tracking MTTR over time tells you whether your crash resilience investments are actually paying off. If MTTR isn’t decreasing, your interventions aren’t working.

Blast Radius Measurement: What percentage of users or services were affected by a given crash? A crash that affects 0.01% of users is categorically different from one that affects 40% of users, even if the underlying exception is identical. Blast radius measurement requires correlating crash telemetry with user session data and service dependency maps.

Repeat Crash Rate: What percentage of crashes are recurring failures that your team has seen before? A high repeat crash rate indicates that your post-mortem process isn’t producing structural fixes — you’re applying band-aids rather than addressing root causes.

Time to Detection: How long between a crash first occurring and your team being alerted? Crashes that affect users for minutes before triggering alerts are fundamentally different from crashes that are detected in seconds. Time to detection is where observability investment pays off most directly.
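Three of these metrics reduce to simple arithmetic once the underlying events are collected. The sketch below assumes sessions, incidents, and crash fingerprints are already available as plain values; the function names and input shapes are illustrative, not any vendor’s API.

```python
def crash_free_session_rate(total_sessions: int, crashed_sessions: int) -> float:
    """Percentage of sessions that completed without a crash."""
    return 100.0 * (total_sessions - crashed_sessions) / total_sessions


def mean_time_to_recovery(incidents: list[tuple[float, float]]) -> float:
    """Average minutes from detection to resolution.

    Each incident is a (detected_at, resolved_at) pair in minutes.
    """
    return sum(resolved - detected for detected, resolved in incidents) / len(incidents)


def repeat_crash_rate(fingerprints: list[str], known: set[str]) -> float:
    """Percentage of crashes whose fingerprint was already seen before."""
    repeats = sum(1 for fp in fingerprints if fp in known)
    return 100.0 * repeats / len(fingerprints)
```

For scale: 100 crashed sessions out of 200,000 is a 99.95% crash-free session rate, while 600 crashed sessions out of the same total is 99.70%.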

The Four Golden Signals Applied to Crash Resilience Engineering

Google’s SRE framework defines four golden signals for monitoring distributed systems. Applying them specifically to crash resilience engineering:

Latency: Track separately for successful and failed requests. A service where 99% of requests complete in 50ms but 1% hang for 30 seconds looks healthy at the median while causing massive user-facing problems. Crash resilience engineering requires percentile-based latency tracking (P50, P95, P99, P99.9) rather than a single average or median.

Traffic: Absolute demand on the system. Correlating traffic spikes with crash events is how you identify resource exhaustion failures. If crashes consistently appear when traffic crosses a specific threshold, you’ve found a capacity limit that needs to be addressed architecturally.

Errors: Both explicit failures (HTTP 5xx responses) and implicit failures (requests that succeed but return incorrect results). The implicit failures are the dangerous ones: they’re the silent data corruption events that escape traditional error monitoring.

Saturation: How close your most constrained resources are to their limits. Database connection pool utilization, memory usage, CPU saturation, file descriptor counts. Saturation metrics are your early warning system for resource exhaustion crashes. If connection pool utilization is consistently at 85%, you’re one traffic spike away from exhaustion.
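The latency point is easy to check with the numbers from the example: 99% of requests at 50ms and 1% at 30 seconds. The sketch below uses a nearest-rank percentile, which is one of several common definitions; the function name and the sample of 10,000 requests are assumptions.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# 10,000 requests: 99% take 50 ms, 1% hang for 30 seconds.
latencies_ms = [50.0] * 9900 + [30_000.0] * 100

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)
p999_ms = percentile(latencies_ms, 99.9)
```

With this data the mean is 349.5ms, P50 is 50ms, and under the nearest-rank definition even P99 is still 50ms because exactly 1% of requests are slow. Only P99.9 surfaces the 30-second tail, which is why the higher percentiles belong on the dashboard.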

Instrumenting for Resilience

Effective crash telemetry requires instrumentation that was designed with crash resilience in mind — not just application performance monitoring bolted on after the fact.

Every service boundary should emit:

- Request count and failure rate

- Latency distribution (not just average)

- Circuit breaker state transitions

- Retry attempt counts and outcomes

- Resource utilization for constrained resources

Every crash should capture:

- Full stack trace with symbolication (for mobile applications)

- User session context (what the user was doing when the crash occurred)

- System state at time of crash (memory usage, active connections, recent deployments)

- Correlation IDs linking frontend crashes to backend distributed traces
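As a rough illustration of the per-crash payload, here is a sketch of a crash report record. Every field name is hypothetical and would be adapted to whatever your crash reporting tool actually accepts; the point is that session context, system state, and a correlation ID travel together with the stack trace.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class CrashReport:
    stack_trace: str          # symbolicated trace
    session_action: str       # what the user was doing
    memory_mb: float          # system state at time of crash
    active_connections: int
    last_deploy_id: str       # most recent deployment identifier
    # Shared with backend traces so frontend crashes can be joined to them.
    correlation_id: str = field(default_factory=lambda: uuid.uuid4().hex)

    def to_json(self) -> str:
        """Serialize the report for shipping to a telemetry pipeline."""
        return json.dumps(asdict(self), sort_keys=True)
```

In practice the `correlation_id` would be propagated from the incoming request rather than generated fresh, so the same ID appears in both the client crash and the backend trace.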

The correlation between frontend and backend is particularly valuable. Observability platforms like Datadog and Honeycomb allow teams to link a mobile application crash to a specific backend API response — revealing that what appeared to be a client-side crash was actually the downstream symptom of an exceeded rate limit in a backend microservice. Without that correlation, teams waste hours debugging the wrong layer of the stack.

Modern observability platforms automatically aggregate subsequent errors with similar stack traces and messages, reducing cognitive load during incident response. They also provide symbolication for mobile crash reports — translating obfuscated memory addresses back into human-readable code using dSYM or Proguard mapping files — which can reduce MTTR for mobile engineering teams by hours.

Connecting Crashes to Business Impact

The final piece of crash telemetry strategy is connecting technical metrics to business outcomes. Engineering leadership needs to be able to walk into a board meeting and say “our investment in crash resilience engineering protected $X in revenue last quarter” — not “we reduced our P99 latency by 40ms.”

This requires instrumenting your crash telemetry to capture business-relevant context:

- Which user segment experienced the crash (free vs. paid, new vs. returning)?

- What was the user attempting to accomplish (checkout, onboarding, core workflow)?

- What is the estimated revenue impact of crashes in this user journey?

With this context, you can calculate that a crash affecting 2% of checkout sessions costs approximately $Y per hour based on your average order value and conversion rate. That number makes crash resilience engineering a revenue protection conversation, not a technical debt conversation.
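The arithmetic behind that estimate can be sketched as follows. It assumes crashed sessions would otherwise have converted at your normal rate and that the sale is lost entirely, both of which are simplifications. The function name and all the numbers in the example are hypothetical.

```python
def hourly_revenue_at_risk(checkout_sessions_per_hour: float,
                           crash_fraction: float,
                           conversion_rate: float,
                           average_order_value: float) -> float:
    """Estimated revenue lost per hour to crashes in the checkout journey.

    Assumes each crashed session would have converted at `conversion_rate`
    and that a crashed session never completes its order.
    """
    crashed_sessions = checkout_sessions_per_hour * crash_fraction
    lost_orders = crashed_sessions * conversion_rate
    return lost_orders * average_order_value
```

For example, 10,000 checkout sessions an hour, a 2% crash rate, a 50% checkout conversion rate, and an $80 average order value put roughly $8,000 an hour at risk.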

From Firefighting to Engineering: The Cultural Shift

Technical patterns and telemetry frameworks are necessary but insufficient for crash resilience engineering. The cultural transformation is equally important — and significantly harder.

The Reactive Firefighting Trap

Traditional software incident management is a reactive cycle. Alert fires. War room assembles. Engineers scramble to diagnose under pressure. Root cause found. Band-aid applied. Deployment pushed. Everyone goes home exhausted. Three weeks later, a similar crash fires. The cycle repeats.

This approach generates what the Google SRE framework calls “toil” — manual, repetitive work that scales linearly with system complexity and provides no enduring architectural value. The 2025 SRE Report from Catchpoint found that toil reduction is the top priority for SRE teams globally, precisely because organizations recognize that reactive firefighting is consuming engineering capacity that should be invested in structural resilience.

The economic argument against reactive firefighting is overwhelming. The average minute of downtime across global organizations costs $14,056. Fortune 500 companies can exceed $5 million in direct costs from a single critical incident. Investing in crash resilience engineering to prevent those incidents delivers ROI that makes most product feature investments look modest by comparison. Research evaluating Chaos Engineering programs demonstrates a 245% ROI. Implementing Circuit Breaker patterns alone reduces cascading failures by 83.5%.

The Blameless Post-Mortem

The cultural foundation of crash resilience engineering is the blameless post-mortem. This is not a novel concept — Google’s SRE framework has advocated for it for years — but it’s still poorly implemented in most organizations.

A blameless post-mortem operates on the premise that engineers make the best decisions available to them given the information, tools, and time pressure they had at the moment of the incident. The goal is not to identify who made a mistake, but to identify what systemic conditions made that mistake possible — and then fix those conditions.

The blameless post-mortem template that drives architectural improvements asks different questions than traditional incident reviews:

Traditional (blame-oriented):

- Who deployed the change that caused the crash?

- Why didn’t they test it properly?

- How do we prevent this person from making this mistake again?

Crash resilience engineering (system-oriented):

- What architectural conditions allowed this failure to propagate as far as it did?

- What monitoring gaps delayed detection?

- What documentation or knowledge gaps made diagnosis harder?

- What structural changes would make this class of failure impossible or automatically contained?

The last question is the crucial one. Every post-mortem should produce at least one architectural improvement — not just a process change or a reminder to be more careful. If the post-mortem concludes with “the engineer should have tested more thoroughly,” you’ve failed. If it concludes with “we’re implementing automated rollback triggered by error rate thresholds within 5 minutes of deployment,” you’ve succeeded.

Proactive Resilience: The Engineering Mindset

Charity Majors, a prominent thought leader in observability and SRE, articulates the proactive resilience mindset with characteristic directness: “If it is a predictable problem, then why haven’t you automated the fix?”

This question is the north star of crash resilience engineering. If your team knows that database connections are flaky under load, manually monitoring those connections and responding to alerts is a waste of human capital. The structural fix — a Circuit Breaker, a connection pool with proper sizing and timeout configuration — should make human intervention unnecessary for that specific failure mode.
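To make the Circuit Breaker idea concrete, here is a minimal sketch in Python. The class name, the `failure_threshold` and `reset_timeout` parameters, and the fallback convention are all illustrative assumptions, not the API of any particular library. A production system would more likely use a maintained resilience library and add per-dependency metrics.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker is open and no fallback was supplied."""


class CircuitBreaker:
    """Minimal breaker: closed -> open -> half-open -> closed (illustrative only)."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_timeout = reset_timeout          # seconds to wait before a trial call
        self._clock = clock                         # injectable so tests can control time
        self._failures = 0
        self._opened_at = None                      # None means the circuit is closed

    def call(self, func, *args, fallback=None, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout:
                # Open: fail fast instead of hitting the struggling dependency.
                if fallback is not None:
                    return fallback()
                raise CircuitOpenError("circuit is open")
            # Reset timeout elapsed: half-open, let one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                # Open the circuit, or re-open it after a failed trial call.
                self._opened_at = self._clock()
            if fallback is not None:
                return fallback()
            raise
        # A success closes the circuit and resets the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Once the breaker is open, the flaky dependency is not called at all until `reset_timeout` has passed, which is what removes the human from the loop for this failure mode.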

The Erlang runtime environment, which powers systems at WhatsApp and Ericsson with extraordinary reliability, is built on exactly this philosophy: “things are only done when they’re done, and you should expect failures at any time.” Erlang’s supervision tree architecture automatically restarts crashed processes, isolates failures, and maintains system operation without human intervention. The engineering mindset that produced Erlang is the same mindset that crash resilience engineering tries to instill in teams working with any technology stack.

This philosophy also informs how you configure monitoring. In a well-designed Kubernetes environment, individual pod crashes should not page an on-call engineer. The orchestration layer is designed to restart pods automatically. Alerting a human every time a pod crashes is alert fatigue in the making. The correct monitoring philosophy focuses on persistent, user-facing symptoms — “user-facing error rate has exceeded 1% for more than 5 minutes” — not on implementation-level events that the system is designed to handle automatically.
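As a rough illustration of symptom-based alerting, the sketch below pages only when the user-facing error rate has stayed above 1% across a full 5-minute window. The `Sample` record and the `should_page` function are hypothetical names. In practice this rule would usually live in your monitoring system's alert configuration rather than in application code.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    timestamp: float   # seconds since epoch
    requests: int      # requests served in this scrape interval
    errors: int        # user-facing errors in this scrape interval


def should_page(samples, threshold=0.01, sustain_seconds=300):
    """Return True only if the error rate stayed above `threshold`
    for every sample in the trailing `sustain_seconds` window."""
    if not samples:
        return False
    samples = sorted(samples, key=lambda s: s.timestamp)
    latest = samples[-1].timestamp
    # Require enough history to cover the whole window before paging anyone.
    if latest - samples[0].timestamp < sustain_seconds:
        return False
    window = [s for s in samples if s.timestamp >= latest - sustain_seconds]
    return all(s.requests > 0 and s.errors / s.requests > threshold for s in window)
```

A single bad scrape, or a pod restart that briefly spikes errors, never satisfies the "every sample in the window" condition, so it never wakes anyone up.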

Building the Learning Loop

The sustainable version of crash resilience engineering is a learning loop: every production incident produces structural improvements that make the next incident less likely and less severe. Over time, this loop builds an organization that is genuinely antifragile — one that gets stronger from encountering failures rather than merely surviving them.

Building this loop requires:

Systematic knowledge capture: Every incident, post-mortem, and architectural decision must be documented. The cost of organizational knowledge loss is staggering — research shows that up to 50% of corporate knowledge can’t be found centrally, and employees spend over 100 minutes daily searching for information they need. In crash resilience engineering, undocumented incident history means teams repeatedly diagnose the same failure modes from scratch. Proper documentation practices are a prerequisite for effective crash resilience.

Metrics that track improvement: Repeat crash rate, MTTR trend, and blast radius reduction over time are the metrics that tell you whether your crash resilience investments are compounding. If repeat crash rate isn’t decreasing quarter over quarter, your post-mortem process isn’t generating structural fixes.

Technical debt management: Technical debt is the primary driver of system fragility. Unmanaged technical debt directly exacerbates integration brittleness and makes every category of crash harder to contain. Martin Fowler’s Technical Debt Quadrant, which classifies debt along two axes (deliberate versus inadvertent, and prudent versus reckless), provides the framework for strategic prioritization. Companies like Facebook dedicate entire months (“hack-a-month”) to debt reduction rather than treating it as an afterthought.

Structural Improvements: Turning Data Into Better Architecture

The culmination of crash resilience engineering is using crash data to make architectural decisions — not just fixing the bug that caused the last crash, but redesigning the system so that entire categories of failures become impossible or automatically contained.

Pattern Recognition Across Incidents

The classification framework described earlier becomes most powerful when applied across multiple incidents over time. If you’ve had three integration brittleness failures in the last quarter, all involving synchronous calls to the same third-party payment provider, that’s not three separate bugs — that’s an architectural pattern telling you to implement a Circuit Breaker and async fallback for that specific integration.

Aggregating crash data by category over time reveals:

- Which failure categories are most prevalent in your specific system

- Which categories are increasing vs. decreasing in frequency

- Which architectural investments have produced measurable improvement

- Where technical debt is accumulating risk

This analysis should feed directly into architectural roadmap planning. If resource exhaustion failures have increased 40% in the last two quarters while traffic has grown 20%, your capacity planning model is broken and needs to be redesigned before the next growth spike.
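A small sketch of that comparison, assuming incidents have already been labeled with a quarter and a category. The function names and the category strings are placeholders for whatever your own classification uses.

```python
from collections import Counter, defaultdict


def incidents_by_category(incidents):
    """Count incidents per quarter and category.

    `incidents` is an iterable of (quarter, category) tuples,
    for example ("2025-Q1", "resource_exhaustion").
    """
    counts = defaultdict(Counter)
    for quarter, category in incidents:
        counts[quarter][category] += 1
    return counts


def growth(before, after):
    """Fractional change from `before` to `after` (0.4 means +40%)."""
    return (after - before) / before


def outpaces_traffic(incident_growth, traffic_growth):
    """True when a failure category grows faster than the load driving it."""
    return incident_growth > traffic_growth
```

With 10 resource exhaustion incidents two quarters ago and 14 now, `growth(10, 14)` is 0.4. Against 20% traffic growth, `outpaces_traffic(0.4, 0.2)` flags the category for the roadmap discussion.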

The Before/After Architecture Mindset

Every significant architectural improvement in crash resilience engineering should be documented as a before/after comparison. Not just “we added a Circuit Breaker” but “here’s what the system looked like when Service A called Service B synchronously, here’s what the blast radius of a Service B failure looked like, here’s the new architecture with Circuit Breaker and async fallback, here’s the measured reduction in blast radius.”

This documentation serves multiple purposes. It builds organizational knowledge about which architectural patterns work in your specific context. It provides evidence for the ROI of crash resilience investments. It helps new engineers understand the reasoning behind architectural decisions rather than treating them as arbitrary constraints.

Chaos Engineering: Proactive Failure Injection

Once you have solid telemetry and architectural patterns in place, the next frontier of crash resilience engineering is proactive failure injection — deliberately introducing failures into your system to validate that your resilience patterns actually work under real conditions.

Netflix’s Chaos Engineering approach, documented in their FIT (Failure Injection Testing) framework, is the canonical example. The methodology involves three steps: formulate a hypothesis about how the system will behave under a specific failure condition, strictly contain the blast radius of the experiment, and execute the fault to observe whether the hypothesis holds.

Research on Chaos Engineering outcomes shows that actively running reliability tests on any given application leads to a 30% reduction in critical incidents for that application annually. The ROI of Chaos Engineering programs is estimated at 245% — an extraordinary return that reflects the high cost of unplanned outages compared to the relatively modest cost of structured resilience testing.

The key principle is blast radius containment during experiments. You don’t start by taking down your entire production database. You start by injecting a 100ms latency increase into a single non-critical service in a staging environment and verifying that your Circuit Breaker trips correctly and your fallback path serves appropriate content. You expand the scope of experiments gradually as you build confidence in your resilience patterns.
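The hypothesis, contain, execute loop can be sketched in a few lines. This is a toy, in-process version with hypothetical names (`inject_latency`, `run_latency_experiment`). It only measures whether one call stays inside a latency budget and does not check breaker state, but it shows the shape of an experiment whose blast radius is a single call path.

```python
import time
from dataclasses import dataclass


@dataclass
class ExperimentResult:
    hypothesis: str          # what we expected to happen
    held: bool               # whether the observation matched the hypothesis
    observed_seconds: float  # measured duration of the faulted call


def inject_latency(func, delay_seconds):
    """Wrap `func` so every call is delayed: this is the injected fault."""
    def faulty(*args, **kwargs):
        time.sleep(delay_seconds)
        return func(*args, **kwargs)
    return faulty


def run_latency_experiment(target, delay_seconds, budget_seconds, hypothesis):
    """Inject latency into one call to `target` and compare against the budget."""
    faulty = inject_latency(target, delay_seconds)
    start = time.perf_counter()
    faulty()
    elapsed = time.perf_counter() - start
    return ExperimentResult(hypothesis, elapsed <= budget_seconds, elapsed)
```

For example, `run_latency_experiment(render_page, 0.1, 0.5, "page renders within 500ms with +100ms upstream latency")` reports whether the hypothesis held, where `render_page` stands in for whatever call path you are testing.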

Advanced Chaos Engineering extends to disaster recovery validation — testing whether your multi-region failover actually works during a simulated cloud provider zone outage, or whether your database backup restoration process achieves the Recovery Time Objectives and Recovery Point Objectives specified in your SLAs. These tests are uncomfortable to run. They’re also the only way to know whether your disaster recovery plan is real or theoretical.

Measuring Improvement Over Time

The final piece of crash resilience engineering is measurement. You need to know whether your investments are working.

The core measurement framework:

Crash-Free Session Rate trend: Is this number increasing quarter over quarter? If you’re at 99.7% and your target is 99.95%, what’s the trajectory?

MTTR trend: Is your mean time to recovery decreasing? If incidents that took 4 hours to resolve last year now take 45 minutes, that’s a measurable improvement in organizational resilience capability.

Repeat crash rate: What percentage of incidents are recurring failures? A decreasing repeat crash rate indicates that your post-mortem process is producing structural fixes.

Blast radius distribution: Are your failures becoming more localized over time? If your architectural investments in bulkhead isolation and Circuit Breakers are working, you should see a shift from large-blast-radius incidents to small-blast-radius incidents.

Time to detection: Is your observability improving? Decreasing time to detection means users are experiencing problems for less time before your team responds.
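These metrics are simple to compute once incidents are recorded consistently. The sketch below assumes a hypothetical `Incident` record with a stable `fingerprint` per failure mode, and it measures MTTR from the start of user impact to resolution. Teams define these boundaries differently, so treat the definitions as one reasonable choice.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Incident:
    fingerprint: str    # hypothetical stable ID for the failure mode
    started_at: float   # epoch seconds when user impact began
    detected_at: float  # epoch seconds when the team was alerted
    resolved_at: float  # epoch seconds when user impact ended


def crash_free_session_rate(total_sessions, crashed_sessions):
    """Fraction of sessions that ended without a crash."""
    return 1 - crashed_sessions / total_sessions


def mttr_minutes(incidents):
    """Mean time to recovery, measured from impact start to resolution."""
    return mean((i.resolved_at - i.started_at) / 60 for i in incidents)


def time_to_detection_minutes(incidents):
    """Mean delay between impact start and detection."""
    return mean((i.detected_at - i.started_at) / 60 for i in incidents)


def repeat_crash_rate(incidents):
    """Fraction of incidents whose fingerprint was already seen earlier."""
    seen = set()
    repeats = 0
    for incident in sorted(incidents, key=lambda i: i.started_at):
        if incident.fingerprint in seen:
            repeats += 1
        seen.add(incident.fingerprint)
    return repeats / len(incidents)
```

Computing these per quarter and plotting the trend is enough to answer the question each metric poses.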

Connecting these metrics to business outcomes — revenue protected, customer churn prevented, SLA compliance maintained — transforms crash resilience engineering from a technical discipline into a business strategy. This is the conversation that gets engineering leadership the investment needed to do this work properly.

Implementation Roadmap: Getting Started

Crash resilience engineering is a journey, not a destination. You don’t implement everything in this article in a sprint. You build capabilities systematically, starting with the highest-impact investments and expanding from there.

Phase 1: Baseline and Classification (Weeks 1-4)

Before you can improve, you need to understand where you are.

Instrument for the Four Golden Signals. If you don’t have latency, traffic, errors, and saturation metrics for every service, start here. This is your foundation. Without it, everything else is guesswork.

Establish crash classification. Take your last 30 days of incidents and classify each one using the five-category framework. This exercise alone will reveal patterns you didn’t know existed and identify which categories dominate your incident profile.

Calculate your baseline metrics. Crash-free session rate, MTTR, repeat crash rate, time to detection. Write these numbers down. They’re your starting point.

Map your dependency graph. Not the architecture diagram from two years ago — the actual dependency graph, discovered through distributed tracing. Every synchronous service-to-service call is a potential failure propagation path.

Phase 2: Quick Wins (Weeks 5-12)

Implement Circuit Breakers on your highest-risk integrations. Based on your dependency map, identify the three to five integrations that have caused the most blast-radius failures. Wrap them in Circuit Breakers with fallback paths. This is the single highest-ROI investment in crash resilience engineering.

Add timeout constraints to all network calls. Every HTTP call, every database query, every external API request needs a timeout. No exceptions. This removes the infinite-hang failure mode: a stalled dependency can hold a caller for at most the timeout, not indefinitely.
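As one way to enforce this in asynchronous Python code, the sketch below wraps any awaitable call in `asyncio.wait_for`. The helper name `call_with_timeout` and the `slow_dependency` stand-in are illustrative. Most HTTP and database clients also accept their own timeout parameters, which you should set as well.

```python
import asyncio


async def call_with_timeout(make_call, timeout_seconds, fallback=None):
    """Await `make_call()` but give up after `timeout_seconds`."""
    try:
        return await asyncio.wait_for(make_call(), timeout=timeout_seconds)
    except asyncio.TimeoutError:
        # wait_for cancels the pending call; serve the fallback instead of hanging.
        return fallback


async def slow_dependency():
    # Stand-in for a remote call that has stalled.
    await asyncio.sleep(10)
    return "fresh"
```

Calling `asyncio.run(call_with_timeout(slow_dependency, 0.05, fallback="stale"))` comes back after roughly 50 milliseconds with the fallback value instead of waiting out the full 10 seconds.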

Implement exponential backoff with jitter for retry logic. Review every place in your codebase where retry logic exists. Replace fixed-delay retries with exponential backoff plus jitter.
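Here is a minimal sketch of the "full jitter" variant, where each delay is drawn uniformly between zero and an exponentially growing ceiling. The function names, defaults, and the choice of which exceptions to retry are assumptions for illustration.

```python
import random
import time


def backoff_delays(max_retries, base=0.1, cap=10.0, rng=random):
    """Yield full-jitter delays: uniform in [0, min(cap, base * 2**attempt)]."""
    for attempt in range(max_retries):
        yield rng.uniform(0, min(cap, base * 2 ** attempt))


def retry(func, max_attempts=5, base=0.1, cap=10.0,
          retry_on=(ConnectionError, TimeoutError), sleep=time.sleep):
    """Call `func` up to `max_attempts` times, sleeping a jittered delay between tries."""
    delays = backoff_delays(max_attempts - 1, base, cap)
    while True:
        try:
            return func()
        except retry_on:
            delay = next(delays, None)
            if delay is None:
                raise  # out of attempts: surface the last error
            sleep(delay)
```

The jitter matters as much as the exponent. Without it, every client that failed at the same moment retries at the same moment, and the recovering dependency gets hit by synchronized waves.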

Run your first blameless post-mortem. Pick a recent incident and run a post-mortem using the system-oriented question framework. Practice the cultural shift in a low-stakes context.

Phase 3: Structural Improvements (Months 3-6)

Implement bulkhead isolation for high-traffic services. Identify services where a single endpoint type could exhaust shared resources and implement thread pool isolation.
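Thread pool isolation can be sketched with one executor per workload type, so that a stalled workload can only exhaust its own workers. The `Bulkheads` class and the pool names are hypothetical. Note that `ThreadPoolExecutor` queues excess work without bound, so a production version would also need a queue limit or load shedding.

```python
from concurrent.futures import ThreadPoolExecutor


class Bulkheads:
    """One dedicated thread pool per workload type (illustrative only)."""

    def __init__(self, sizes):
        # `sizes` maps a pool name to its worker count, e.g. {"reports": 2, "checkout": 8}.
        self._pools = {
            name: ThreadPoolExecutor(max_workers=workers, thread_name_prefix=name)
            for name, workers in sizes.items()
        }

    def submit(self, pool_name, func, *args, **kwargs):
        """Run `func` on the named pool and return its Future."""
        return self._pools[pool_name].submit(func, *args, **kwargs)

    def shutdown(self):
        """Wait for in-flight work and release all pools."""
        for pool in self._pools.values():
            pool.shutdown(wait=True)
```

If every worker in the `reports` pool is stuck on a slow query, calls submitted to `checkout` still run immediately because they never compete for the same threads.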

Build out-of-band data validation. For any service handling critical data, implement background validation that continuously checks data integrity against business rules.

Establish canary deployment practices. Route a small percentage of traffic to new deployments before full rollout. Instrument automatic rollback triggered by error rate thresholds.
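The rollback trigger itself can be a small, explicit decision function. The sketch below is one possible rule set with made-up thresholds. `WindowStats`, `canary_decision`, and all the default values are assumptions to be tuned against your own traffic.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    requests: int  # requests observed in the evaluation window
    errors: int    # failed requests in the same window

    @property
    def error_rate(self):
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(baseline, canary, max_absolute=0.01, max_relative=2.0, min_requests=500):
    """Return "wait", "rollback", or "promote" for the current canary window."""
    if canary.requests < min_requests:
        return "wait"      # not enough traffic to judge yet
    if canary.error_rate > max_absolute:
        return "rollback"  # canary breaches the absolute error budget
    if baseline.error_rate > 0 and canary.error_rate > max_relative * baseline.error_rate:
        return "rollback"  # canary is markedly worse than the stable fleet
    return "promote"
```

Comparing against the baseline as well as an absolute threshold catches regressions that stay under the global limit but are clearly worse than the version they replace.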

Address your top technical debt items. Based on your crash classification data, identify which technical debt items are contributing most to your incident profile. Prioritize these for remediation.

Phase 4: Proactive Resilience (Months 6-12)

Begin Chaos Engineering experiments. Start in staging with small-blast-radius experiments. Validate that your Circuit Breakers trip correctly. Test your fallback paths under real failure conditions.

Implement predictive monitoring. Use saturation metrics to predict resource exhaustion before it happens. Set alerts at 70% utilization rather than 95%.
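One simple way to predict exhaustion is to fit a straight line through recent utilization samples and project when it crosses 100%. The sketch below does this with an ordinary least-squares fit. The function names and the one-hour horizon are illustrative assumptions, and real resource usage is often not linear, so treat the projection as an early warning rather than a forecast.

```python
def seconds_until_exhaustion(samples, limit=1.0):
    """Project when utilization reaches `limit` using a least-squares line.

    `samples` is a list of (timestamp_seconds, utilization) pairs with
    utilization between 0 and 1. Returns seconds after the last sample,
    or None if there is no upward trend.
    """
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(t for t, _ in samples) / n
    mean_u = sum(u for _, u in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return None
    slope = sum((t - mean_t) * (u - mean_u) for t, u in samples) / var_t
    if slope <= 0:
        return None
    intercept = mean_u - slope * mean_t
    t_limit = (limit - intercept) / slope
    return max(0.0, t_limit - samples[-1][0])


def should_warn(samples, warn_at=0.70, horizon_seconds=3600):
    """Warn at the static threshold or when exhaustion is projected within the horizon."""
    eta = seconds_until_exhaustion(samples)
    return samples[-1][1] >= warn_at or (eta is not None and eta <= horizon_seconds)
```

Combining the static 70% threshold with a projected time to exhaustion catches both the slow climb and the fast ramp that would blow through a static threshold before anyone reacts.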

Build organizational muscle memory. Run regular GameDays — structured exercises where the team practices incident response under simulated failure conditions. The goal is that real incidents feel familiar, not panicked.

Establish quarterly resilience reviews. Review your core metrics, identify the highest-risk failure modes in the next quarter, and plan architectural investments accordingly.

FAQ

What’s the difference between crash resilience engineering and traditional software quality assurance?

Software quality assurance focuses on preventing defects from reaching production through testing, code review, and process controls. Crash resilience engineering accepts that some defects will reach production — because in complex distributed systems, they always do — and focuses on designing systems that contain failures, recover automatically, and degrade gracefully rather than crashing catastrophically. They’re complementary disciplines. QA reduces the frequency of failures; crash resilience engineering reduces the impact of the failures that slip through.

How do you justify the investment in crash resilience engineering to non-technical leadership?

The business case is straightforward when you connect crashes to revenue impact. The average minute of enterprise downtime costs $14,056. Fortune 500 companies can exceed $5 million per critical incident. Chaos Engineering programs deliver 245% ROI. Circuit Breaker implementation reduces cascading failures by 83.5%. Frame crash resilience engineering as revenue protection and competitive differentiation — not as technical debt reduction.

What’s the most common mistake teams make when starting crash resilience engineering?

Trying to do everything at once. Teams read an article like this one, get excited, and immediately try to implement Circuit Breakers, Bulkheads, Chaos Engineering, and blameless post-mortems simultaneously. The result is organizational overwhelm and partial implementations that don’t deliver value. Start with baseline instrumentation and classification. Add Circuit Breakers on your highest-risk integrations. Run one blameless post-mortem. Build from there.

How do you handle the tension between shipping velocity and crash resilience investments?

Paradoxically, crash resilience engineering enables faster shipping velocity over time. Teams that spend 40% of their capacity on reactive firefighting have 40% less capacity for feature development. As crash resilience investments reduce incident frequency and severity, that capacity is freed for product development. The short-term investment pays back in long-term velocity. Teams that have implemented mature crash resilience practices consistently report higher deployment frequency and lower change failure rates.

What role does technical debt play in crash resilience?

Technical debt is the primary driver of system fragility. Unmanaged technical debt directly exacerbates every crash category — it makes logic failures harder to diagnose, integration brittleness harder to contain, and resource exhaustion harder to predict. Crash resilience engineering and technical debt management are deeply intertwined. Using crash classification data to identify which technical debt items are generating the most incident risk is the most effective way to prioritize debt remediation.

When should we consider bringing in external help for crash resilience engineering?

When your team is spending more than 25-30% of its capacity on reactive incident response, when you’re experiencing repeat failures in the same categories despite post-mortem efforts, or when a critical system needs resilience improvements faster than your internal capacity allows. External teams bring pattern recognition from working across many systems and architectures — they’ve seen your failure modes before, in different contexts, and know which interventions work. Software development consulting can stabilize immediate crises, while longer-term resilience partnerships can systematically transform your architectural foundation.

Conclusion: From Chaos to Confidence

Here’s what crash resilience engineering ultimately delivers: the ability to sleep through Tuesday nights.

Not because your system never fails — it will. Every sufficiently complex system does. But because when it fails, the failure is contained, detected quickly, recovered automatically where possible, and understood structurally rather than treated as a mysterious anomaly.

The journey from reactive firefighting to proactive resilience engineering isn’t quick. It requires investment in instrumentation, architectural patterns, cultural practices, and organizational learning loops. But the ROI is extraordinary — both financially, in terms of downtime costs avoided, and organizationally, in terms of engineering capacity freed from toil and redirected toward building things that matter.

The teams that master crash resilience engineering don’t just have fewer outages. They have faster feature development, higher deployment frequency, lower change failure rates, and engineering cultures where talented people want to work because they’re building things that last rather than constantly firefighting things that break.

Start with the classification framework. Understand your failure patterns. Implement Circuit Breakers on your highest-risk integrations. Run a blameless post-mortem. Build from there.

The 2:47 AM war room is optional. You just have to choose not to need it.

Need help building crash resilience into your production systems? Iterators has battle-tested experience with emergency incident response and long-term resilience architecture across mobile, backend, and infrastructure layers. Schedule a free consultation with us to talk about where to start.