Your investors want to see performance improvements in two weeks, and your team wants to rewrite the entire architecture. This is deadline performance stabilization in its most brutal form.

You’re caught in the middle, and the clock is ticking.

This is the moment that separates engineering leaders who survive funding rounds from those who spend the next quarter explaining why the demo crashed in front of the board. Deadline performance stabilization isn’t a technical problem—it’s a prioritization problem dressed in technical clothing.

Most teams fail not because they lack skill or tooling. They fail because they spend the first week setting up perfect monitoring dashboards while three known, catastrophic bottlenecks continue eating their users alive. They fail because the most intellectually interesting bug in the codebase gets three days of attention while an unindexed database query silently destroys every page load. They fail because someone convinces leadership that a complete rewrite is the “only real solution”—and fourteen days later, the system is worse than when they started.

This article gives you a battle-tested framework for delivering measurable performance improvements when failure means lost investment, customer churn, or a blown SLA. We’re going to cover the psychology of why smart teams fix the wrong things, a systematic triage framework built specifically for deadline-constrained environments, how to balance emergency patches with long-term architectural sanity, and—critically—how to translate your technical wins into the financial language that investors and executives actually trust.

No theoretical advice. No “consider implementing a distributed caching layer for improved throughput.” Just the stuff that works when the board meeting is in fourteen days and your checkout API is responding in 3.2 seconds.

Why Smart Teams Fix the Wrong Things During Deadline Performance Stabilization

Here’s a pattern that plays out with uncomfortable regularity in engineering organizations facing deadline performance stabilization work.

Day one: leadership declares a performance emergency. The engineering team assembles. Someone pulls up the APM dashboard. Someone else opens a profiler. Within hours, a list of performance issues is on the whiteboard—ranging from a catastrophic N+1 database query affecting every single page load to a mildly inefficient animation on a secondary settings screen that approximately 200 users have ever visited.

Then the team starts working.

By day three, three engineers are deep in the animation problem. It’s genuinely interesting. It involves React’s reconciliation algorithm, some subtle state management issues, and a clever optimization pattern that nobody on the team has tried before. Meanwhile, the N+1 query—which is causing a 2.8-second delay on every product page, affecting 100% of users, and directly tanking conversion rates—sits untouched.

This isn’t stupidity. It’s human nature colliding with engineering culture in the worst possible way.

Engineer Curiosity vs. Business Impact in Performance Work

The engineering mind is drawn to interesting problems. This is a feature, not a bug—it’s what drives innovation, keeps developers engaged, and produces genuinely creative solutions to hard problems. But under deadline performance stabilization pressure, curiosity becomes a liability.

When time is unlimited, optimizing the interesting problem is fine. You’ll get to the boring N+1 query eventually. When time is two weeks, spending three days on a problem that affects 0.1% of users while ignoring one that affects 100% of users is catastrophic.

This matches what is usually described as cognitive tunneling: under stress, people default to familiar, comfortable tasks rather than strategically important ones. When deadline pressure kicks in, engineers retreat to what they know, what they find engaging, and what feels productive even when it isn’t. The result is a team working extremely hard on exactly the wrong things.

The antidote isn’t motivation. It’s a framework that removes the option to work on the wrong things in the first place.

The “Perfect Monitoring” Trap That Kills Stabilization Efforts

Second most common failure mode: the team insists they cannot begin optimization without perfect, high-granularity observability data.

This sounds reasonable. You should measure before you optimize. You shouldn’t fly blind. These are real engineering principles championed by performance monitoring experts.

But there’s a version of this that becomes paralyzing. The team spends the first ten days of a fourteen-day sprint installing Datadog, configuring distributed tracing, building custom dashboards, setting alerting thresholds, and debating the correct cardinality for their metrics. By the time they have beautiful observability data, they have four days left to actually fix anything.

As Brendan Gregg—one of the most respected performance engineers in the industry—has noted, “Late optimization is the root of all evil. But even worse is blind optimization without measurements.” The key word is “blind.” If your system is crashing daily due to memory exhaustion in a specific microservice, you don’t need a sophisticated dashboard to tell you to fix the memory leak. You need to stabilize the service immediately.

The goal for deadline performance stabilization is: measure what matters, fix what hurts, then elaborate your measurement infrastructure once the system is stable enough to survive the elaboration.

Architectural Purity vs. Tactical Wins Under Pressure

The third failure mode is the most seductive: the “rewrite everything” temptation.

Legacy code is frustrating. It’s undocumented, inconsistent, and full of decisions that made sense in 2017 and make no sense today. When a developer stares at a slow, tangled codebase, the urge to burn it down and build something clean is overwhelming. And they’ll make a compelling case: “The only real fix is to refactor this entire module. Anything else is just putting a bandage on a gunshot wound.”

This argument is sometimes correct—in a six-month roadmap. It is almost never correct in a two-week emergency requiring deadline performance stabilization.

Martin Fowler’s “Design Stamina Hypothesis” captures the tension precisely: high internal quality does accelerate future development, but achieving that quality requires time you don’t have right now. Rewriting a core system introduces massive functional risk, halts all other development, and guarantees a missed deadline. The question isn’t whether the rewrite is the right long-term answer. The question is whether it’s the right answer for the next fourteen days. It almost never is.

What you need instead is the discipline to implement imperfect, tactical solutions that stop the bleeding—and the documentation discipline to make sure those tactical solutions get properly refactored later. For organizations navigating legacy system modernization, understanding when to patch versus when to replace is critical.

A Framework for Deadline Performance Stabilization Based on Business Impact

The Performance Impact Matrix is a 2×2 grid that maps technical issues based on two axes: Business Impact (vertical) and Engineering Effort (horizontal). It sounds simple because it is. Its power comes not from complexity but from forcing the team to be honest about two things they normally avoid quantifying during deadline performance stabilization.

This framework strips away emotional bias, engineering curiosity, and the tendency to work on what’s familiar. When you plot your bottlenecks on this grid, the prioritization becomes mathematical rather than political.

Building Your Deadline Performance Stabilization Matrix

The four quadrants tell you exactly what to do:

HIGH IMPACT / LOW EFFORT (Quick Wins): These are your primary targets for the two-week sprint. They provide immediate, measurable relief and build stakeholder confidence. Execute these first, fast, and without debate.

HIGH IMPACT / HIGH EFFORT (Strategic Projects): These are critical for long-term viability but cannot be safely executed within deadline performance stabilization constraints without introducing catastrophic risk. Document them thoroughly and schedule them for the next sprint cycle.

LOW IMPACT / LOW EFFORT (Fill-Ins): Address these only if an engineer is blocked waiting for deployments or code reviews on Quick Wins. They’re not worth dedicated time, but they’re not harmful if done opportunistically.

LOW IMPACT / HIGH EFFORT (Money Pits): Discard. These are the “curiosity projects.” They consume massive resources for negligible business value. If someone is advocating for a Money Pit during a two-week emergency, that’s a prioritization conversation you need to have immediately.

Defining Business Impact for Performance Stabilization

“Business impact” cannot be measured in abstract technical terms during deadline performance stabilization. “Reduces CPU cycle time” is not a business impact. You need to translate every bottleneck into a measurable business outcome.

High business impact means:

- The issue affects more than 80% of your user base, or it sits directly on the revenue-generating pathway (checkout funnel, sign-up flow, primary dashboard load)

- The issue is directly causing SLA penalties, compliance violations, or is responsible for production outages

- Fixing it reduces latency by an order of magnitude on high-traffic endpoints—not 50ms, but 1,500ms

- The component is responsible for more than 50% of recent production incidents

Low business impact means:

- The issue affects a secondary feature, an admin panel, or a rarely-used settings screen

- The degradation is measurable only in controlled benchmarks, not in real user experience

- Fixing it would improve performance for less than 5% of your traffic

When you’re under deadline performance stabilization pressure, there is no middle ground. You’re either fixing something that materially affects user experience and business outcomes, or you’re not.

Estimating Engineering Effort for Fast Stabilization

Effort under deadline performance stabilization is not just about labor hours. It’s about time, risk, and reversibility.

Low effort means:

- Implementation, testing, and deployment can be completed within 2-5 days

- The change is isolated—it doesn’t touch core, highly-coupled legacy components

- It can be easily verified and rolled back if it causes unexpected issues

- Testing is straightforward and doesn’t require extensive manual regression across the entire platform

High effort means:

- The change requires altering core system architecture or tightly-coupled components

- Testing requires full regression testing across multiple systems

- Rollback is complex or impossible without significant additional work

- The implementation requires specialized knowledge that only one or two team members possess

Be honest about this during deadline performance stabilization. The tendency under deadline pressure is to underestimate effort (“we can definitely knock that out in two days”) and then watch two days turn into six as hidden complexity emerges. When in doubt, assume things will take longer than expected and plan accordingly.
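The quadrant rules above can be sketched as a small classifier. This is a minimal illustration, not a definitive scoring model: the `Bottleneck` fields, the sample backlog, and the simplified thresholds (more than 80% of users, at most 5 days of effort) are assumptions drawn from the criteria listed in this section, and a real triage session would weigh more signals than these.

```python
from dataclasses import dataclass


@dataclass
class Bottleneck:
    # All fields are illustrative assumptions, not a standard schema.
    name: str
    users_affected_pct: float    # share of users who hit the issue, 0-100
    on_revenue_path: bool        # checkout, sign-up, primary dashboard load
    causes_outages_or_sla: bool  # SLA penalties, compliance issues, outages
    est_days: float              # implementation + testing + deployment
    isolated: bool               # avoids tightly coupled core components
    easy_rollback: bool


def is_high_impact(b: Bottleneck) -> bool:
    return b.users_affected_pct > 80 or b.on_revenue_path or b.causes_outages_or_sla


def is_low_effort(b: Bottleneck) -> bool:
    return b.est_days <= 5 and b.isolated and b.easy_rollback


def quadrant(b: Bottleneck) -> str:
    high_impact, low_effort = is_high_impact(b), is_low_effort(b)
    if high_impact and low_effort:
        return "Quick Win"
    if high_impact:
        return "Strategic Project"
    if low_effort:
        return "Fill-In"
    return "Money Pit"


# Hypothetical backlog used only to exercise the four quadrants.
backlog = [
    Bottleneck("N+1 query on product page", 100, True, False, 2, True, True),
    Bottleneck("Rewrite order module", 100, True, False, 60, False, False),
    Bottleneck("Compress admin panel images", 3, False, False, 0.5, True, True),
    Bottleneck("Settings screen animation", 0.1, False, False, 9, True, True),
]

for item in backlog:
    print(f"{item.name}: {quadrant(item)}")
```

Encoding the rules this way makes the prioritization argument explicit: anyone who disagrees with a placement has to change an input, not just an opinion.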

Getting Stakeholder Alignment on Stabilization Priorities

Here’s the political reality: once you’ve plotted your bottlenecks on the Performance Impact Matrix, someone in leadership will disagree with the resulting prioritization. They’ll want a Strategic Project executed in the two-week window. They’ll have opinions about which Quick Wins are actually important. They’ll advocate for the Money Pit because it’s been on the roadmap for six months.

Your job as an engineering leader executing deadline performance stabilization is to use the matrix as an objective tool to redirect these conversations. When a stakeholder demands a Strategic Project within two weeks, the matrix allows you to say: “Here’s the business impact, here’s the engineering effort, here’s the risk profile. Executing this in two weeks means we accept a 40% probability of introducing a regression that makes things worse. Is that acceptable given the deadline?”

When the answer is framed that way, most reasonable stakeholders choose the Quick Wins.

The matrix turns a political argument into a risk conversation. That’s a much easier conversation to have during deadline performance stabilization.

Emergency Fixes vs. Long-Term Architecture in Deadline Performance Stabilization

Once you have your prioritized list of Quick Wins, you face the second major challenge of deadline performance stabilization: implementing tactical patches without creating technical debt that makes the system worse in three months.

This is a real tension. There’s no way to fully resolve it in a two-week emergency. The goal is to manage it intelligently.

Applying Martin Fowler’s Technical Debt Quadrant to Performance Work

Not all technical debt is created equal during deadline performance stabilization. Fowler’s Technical Debt Quadrant categorizes debt based on intent and awareness, and it’s an essential mental model for emergency performance work.

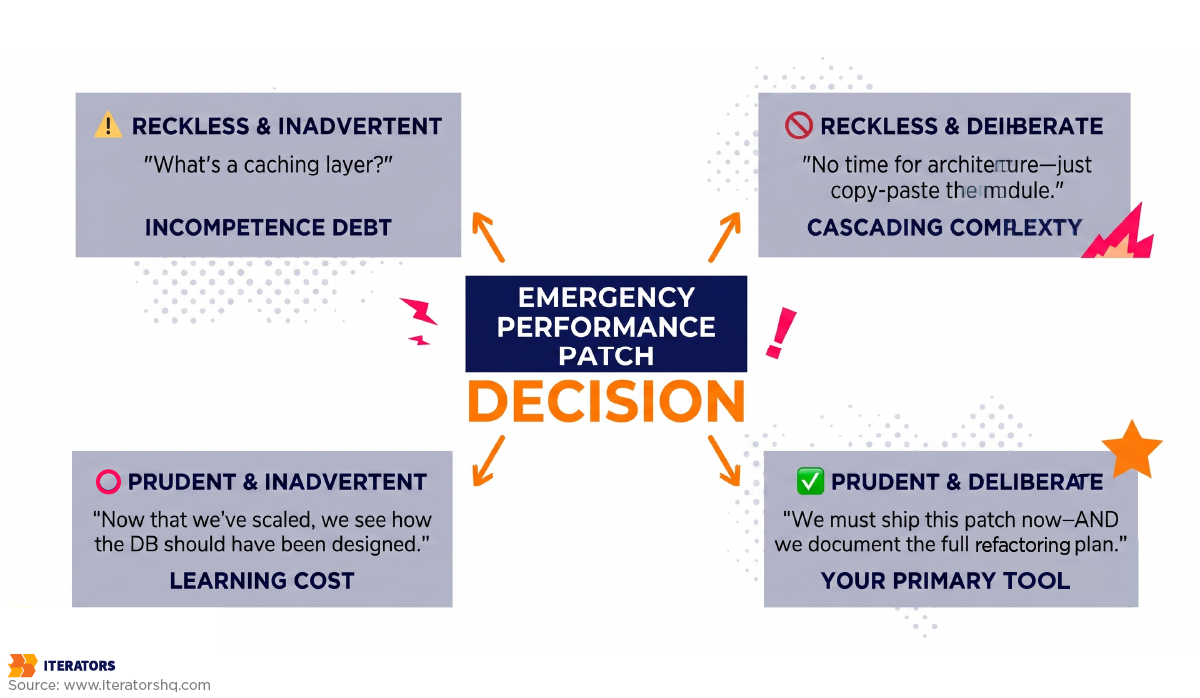

The quadrant has two dimensions: Reckless vs. Prudent (how thoughtful was the decision?) and Deliberate vs. Inadvertent (did you know you were incurring debt?).

Reckless & Inadvertent: “What’s a caching layer?” This debt comes from incompetence or lack of training. It produces unmaintainable spaghetti code and should be eradicated through team development and code review.

Reckless & Deliberate: “We don’t have time for architecture, just copy-paste the whole module.” Highly destructive. Creates immediate, cascading complexity that compounds rapidly.

Prudent & Inadvertent: “Now that we’ve scaled, we realize how we should have designed the database.” The unavoidable cost of learning—you couldn’t have known at the time.

Prudent & Deliberate: “We must ship this performance patch now to survive the demo, and we accept the consequences of refactoring it later.”

In deadline performance stabilization, Prudent and Deliberate debt is your primary tool. The key word is prudent. You’re making a conscious, documented decision to implement a fast, imperfect solution because the alternative—doing nothing, or doing the architecturally correct thing too slowly—is worse for the business.

The critical difference between Prudent and Deliberate debt and Reckless debt is documentation. If you implement a tactical patch and immediately create a ticket explaining exactly why it was implemented, what the technical debt is, and what the proper refactoring looks like, you’ve incurred manageable debt. If you implement the same patch and tell yourself “we’ll fix it later” with no documentation, you’ve incurred reckless debt that will be invisible until it breaks something six months from now.

When Quick Fixes Are Acceptable During Stabilization

A quick fix is acceptable—and often the correct engineering decision—under these specific deadline performance stabilization conditions:

The system is actively degrading or losing revenue. Business continuity overrides architectural elegance. If your checkout API is timing out and you can fix it today with a Redis cache that you’ll properly refactor in a month, you fix it today.

The fix is isolated. A tactical patch inside a self-contained microservice, or a caching layer in front of a slow database endpoint, is acceptable because the blast radius of the suboptimal code is contained. If the quick fix requires touching core shared infrastructure used by every service, it’s no longer a quick fix—it’s a high-effort, high-risk change that belongs in the Strategic Projects quadrant.

The debt is immediately documented. This is non-negotiable during deadline performance stabilization. The moment you merge a tactical patch, you create the corresponding technical debt ticket. It includes: why the tactical approach was chosen, what the proper long-term solution looks like, the estimated effort to implement that solution, and any risks introduced by the current patch.

You have a credible plan to pay it down. “We’ll fix it eventually” is not a plan. “We’ll allocate 20% of the next three sprints to refactoring this component” is a plan. If you can’t articulate when and how the debt will be addressed, you’re not incurring Prudent and Deliberate debt—you’re incurring Reckless debt with extra steps.
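One way to make the documentation condition enforceable is to treat the debt ticket as a structured record and refuse to merge a patch while any field is empty. The sketch below is an assumption about how such a record might look; the `DebtTicket` class and its field names are hypothetical and not tied to any particular issue tracker.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class DebtTicket:
    # Field names are hypothetical; they mirror the items listed above.
    patch: str
    why_tactical: str
    long_term_solution: str
    estimated_effort_days: float
    risks: str
    paydown_plan: str

    def missing_fields(self) -> List[str]:
        """Return the names of fields that were left empty."""
        return [f.name for f in fields(self) if getattr(self, f.name) in ("", None)]


ticket = DebtTicket(
    patch="Cache checkout price lookups",
    why_tactical="Checkout API timing out before the board demo",
    long_term_solution="Fix the N+1 query in the pricing service",
    estimated_effort_days=8,
    risks="Prices may be stale until the cache entry expires",
    paydown_plan="",  # "we'll fix it eventually" does not count as a plan
)

print(ticket.missing_fields())
```

A ticket with an empty `paydown_plan` is exactly the "Reckless debt with extra steps" case described above.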

The Strangler Fig Pattern for Performance Stabilization

When the “rewrite everything” argument is technically correct but temporally impossible, the Strangler Fig pattern is often the right compromise for deadline performance stabilization.

Rather than rewriting a slow legacy component from scratch, you wrap it in a caching layer or an abstraction layer. 90% of requests get served from the fast wrapper. The slow legacy component still exists underneath but now handles only the 10% of requests that genuinely require fresh data. The system is stable. Users experience dramatically improved performance. And the legacy component can be properly replaced over the next several sprints without emergency pressure.

Shopify’s approach to Black Friday scaling illustrates this brilliantly. During the 2024 BFCM weekend, Shopify processed $11.5 billion in sales with peaks of $4.6 million per minute, maintaining 99.999% uptime. They didn’t achieve this by rewriting their Ruby on Rails application. They achieved this by implementing an aggressive edge caching strategy using Nginx and Lua, ensuring that less than 1% of total traffic ever reached the backend application. The backend Ruby application was reserved exclusively for genuinely uncacheable operations: sign-ins, profile updates, and the checkout funnel.

The lesson for deadline performance stabilization: you don’t need to fix the slow thing. You often just need to stop calling the slow thing for every request. For teams managing SaaS enterprise systems, this approach maintains reliability while buying time for proper refactoring.
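The wrapper idea can be illustrated with a small read-through cache placed in front of a slow call. This is a sketch under stated assumptions: `legacy_product_lookup` is a hypothetical stand-in for the legacy component, and the in-process dictionary stands in for whatever shared cache (for example Redis) a production system would more likely use.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Read-through cache: only misses and expired entries reach the loader."""

    def __init__(self, loader: Callable[[Hashable], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._loader = loader
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: Hashable) -> Any:
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        value = self._loader(key)  # the slow legacy call happens only here
        self._store[key] = (now, value)
        return value


legacy_calls = 0


def legacy_product_lookup(product_id: Hashable) -> dict:
    """Hypothetical stand-in for the slow legacy component."""
    global legacy_calls
    legacy_calls += 1
    return {"id": product_id, "name": f"Product {product_id}"}


cache = TTLCache(legacy_product_lookup, ttl_seconds=60)
for _ in range(10):
    cache.get(42)

print(f"hits={cache.hits} misses={cache.misses} legacy_calls={legacy_calls}")
```

The trade-off is staleness: callers may see data up to `ttl_seconds` old, which is why this only suits endpoints that do not require real-time data, and why that limitation belongs in the debt ticket.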

Performance Traps That Waste Time During Deadline Stabilization

If the Performance Impact Matrix tells you what to work on during deadline performance stabilization, this section tells you what to actively avoid. These are the time-wasters that consume days of engineering effort without improving the experience of a single actual user.

Premature Optimization of Non-Bottleneck Code

This is Donald Knuth’s famous warning, “premature optimization is the root of all evil,” applied to deadline performance stabilization work. When engineers optimize code they’re familiar with or find interesting, rather than code that’s actually causing the bottleneck, they can spend days achieving microscopic improvements that have no measurable impact on user experience.

The classic example: shaving 10 milliseconds off a function that runs once per user session while ignoring a database query that runs 47 times per page load and takes 200 milliseconds each time. Fixing the function saves 10ms per session. Eliminating the repeated queries saves up to 9.4 seconds (47 × 200ms) on every page load. These are not equivalent problems.

The antidote is profiling before touching anything during deadline performance stabilization. Not profiling to build beautiful dashboards—profiling to answer one question: where is the time actually going? Run your profiler against real production traffic patterns, find the function or query consuming the most absolute time, and start there. Everything else is secondary until that bottleneck is resolved.

Do this instead: Use existing APM logs, database slow query logs, or a quick profiling session to identify the top three endpoints by absolute response time. Fix those first, regardless of how interesting or uninteresting the underlying problem is.
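Finding the top three endpoints does not require new tooling. A rough sketch, assuming you can export `(endpoint, duration_ms)` pairs from whatever logs you already have, is to sum the time spent per endpoint and sort. The sample data and the function name below are hypothetical.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple

# Hypothetical samples, e.g. parsed from existing access or APM logs.
samples = [
    ("/product", 2800), ("/product", 2900), ("/product", 2700),
    ("/checkout", 3200), ("/checkout", 3100),
    ("/settings", 400),
    ("/search", 900), ("/search", 1100),
]


def top_endpoints_by_total_time(
    records: Iterable[Tuple[str, float]], limit: int = 3
) -> List[Tuple[str, float]]:
    """Rank endpoints by total time consumed (request count x latency)."""
    totals = defaultdict(float)
    for endpoint, duration_ms in records:
        totals[endpoint] += duration_ms
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:limit]


for endpoint, total_ms in top_endpoints_by_total_time(samples):
    print(f"{endpoint}: {total_ms:.0f} ms total")
```

Ranking by total time rather than by the single slowest request keeps attention on what users hit most often, which is the point of the 47-queries example above.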

Over-Investing in Monitoring Before Fixing Known Issues

We covered this in the “Perfect Monitoring” trap, but it deserves its own entry because it’s so common and so damaging to deadline performance stabilization.

The scenario: the team knows the payment processing endpoint is slow. They’ve seen it in logs. Users have complained about it. The product manager has a spreadsheet showing abandoned carts. And yet, the team’s first action is to spend a week instrumenting the entire application with distributed tracing so they can see exactly how slow the payment endpoint is, with perfect granularity, before they attempt to fix it.

You already know it’s slow. You know which endpoint it is. You have enough data to start fixing it now. The sophisticated observability infrastructure is valuable, but it’s valuable for finding the next set of problems after you’ve fixed the obvious ones.

Do this instead: Implement basic alerting on your known critical paths—response time thresholds, error rate alerts—so you’ll know immediately if a fix makes things worse. Build the sophisticated observability platform after the emergency has passed.
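Basic alerting on a critical path can be as small as two threshold checks. The sketch below assumes you can pull recent request durations and an error count for one endpoint; the function names and the example thresholds (1,000 ms p95, 1% error rate) are illustrative assumptions, not recommendations.

```python
from typing import List, Sequence


def p95(values: Sequence[float]) -> float:
    """Nearest-rank 95th percentile, using integer math for the rank."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n)
    return ordered[rank - 1]


def check_critical_path(durations_ms: Sequence[float], error_count: int,
                        p95_limit_ms: float, error_rate_limit: float) -> List[str]:
    """Return alert messages for one endpoint over a recent window."""
    alerts: List[str] = []
    if not durations_ms:
        return alerts
    observed_p95 = p95(durations_ms)
    error_rate = error_count / len(durations_ms)
    if observed_p95 > p95_limit_ms:
        alerts.append(f"p95 {observed_p95:.0f} ms exceeds {p95_limit_ms:.0f} ms")
    if error_rate > error_rate_limit:
        alerts.append(f"error rate {error_rate:.1%} exceeds {error_rate_limit:.1%}")
    return alerts


# Hypothetical window: 90 fast requests, 10 slow ones, 3 errors.
window = [400] * 90 + [3200] * 10
alerts = check_critical_path(window, error_count=3,
                             p95_limit_ms=1000, error_rate_limit=0.01)
for message in alerts:
    print(message)
```

Running the same check before and after each deployment is enough to tell you whether a fix made things worse.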

Perfectionism on Edge Cases Affecting Minimal Users

Under deadline performance stabilization pressure, perfectionism is a luxury you cannot afford. The desire to handle every edge case, support every obscure browser version, and ensure perfect behavior under every possible failure condition is admirable in normal development. In a two-week stabilization sprint, it’s a deadline killer.

If a performance fix works correctly for 99% of users but has a known edge case affecting users on a specific legacy browser version, ship the fix. Document the edge case. Fix it in the next sprint. The alternative, delaying the fix until it’s perfect, means 100% of users continue experiencing poor performance while you polish a solution for 1%.

Do this instead: Define an explicit “acceptable” threshold at the start of the sprint. Something like: “We will ship fixes that work correctly for 95% of users and handle the remaining cases in follow-up tickets.” This gives the team permission to move fast without feeling like they’re cutting corners.

The “Rewrite Everything” Temptation

We’ve covered this in the architectural section, but it’s worth restating as a concrete trap with a concrete alternative for deadline performance stabilization.

The “rewrite everything” argument is compelling because it’s often technically correct. Legacy code is slow because it was written with different constraints, different scale expectations, and different architectural patterns. A modern rewrite would be faster, cleaner, and more maintainable. This is true.

But a rewrite takes months, introduces massive regression risk, and requires halting all other development. Slack’s reliability crisis provides a cautionary tale: when their system reached a tipping point of fragility, the instinct was to rebuild from scratch. What actually stabilized the system was stopping the line, halting feature development, and focusing a task force on fixing the deployment pipeline and stabilizing the existing architecture. They didn’t rewrite—they stabilized what existed.

Do this instead: Apply the Strangler Fig pattern during deadline performance stabilization. Wrap the slow legacy component in a fast caching or abstraction layer. Serve the majority of requests from the wrapper. Replace the legacy component incrementally over subsequent sprints without emergency pressure.

Translating Performance Wins into Business Metrics During Deadline Stabilization

This is the section that determines whether your deadline performance stabilization sprint is perceived as a success or a failure by the people who control your funding.

You can reduce your p95 API response time from 3.2 seconds to 450 milliseconds. You can eliminate 40% of your error budget. You can cut your cloud compute costs by 30%. If you report these numbers to your board as “p95 latency, error budget, and compute costs,” you will receive polite nods and confused questions.

Investors and executives don’t speak the language of latency percentiles. They speak the language of customer acquisition cost, monthly recurring revenue, churn rate, and burn rate. Your job after the technical work of deadline performance stabilization is done is translation.

The Economics of Latency: Why Deadline Performance Stabilization Matters in Dollars

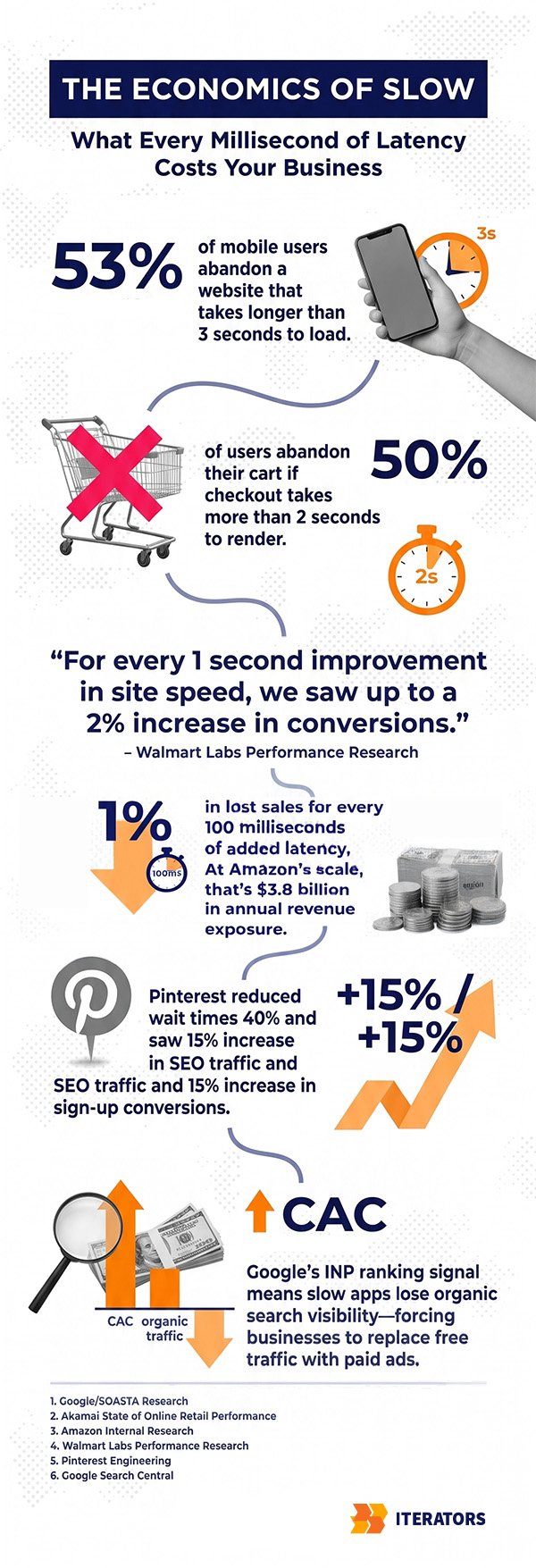

Amazon’s research established the foundational data point: every 100 milliseconds of added page load latency costs 1% of sales. In 2006, when this research was conducted, 1% of Amazon’s sales was approximately $107 million annually. Applied to Amazon’s 2024 retail revenue, the same 1% metric translates to roughly $3.8 billion in annual revenue exposure.

This number exists to give you leverage in a boardroom conversation about deadline performance stabilization. You’re not arguing about milliseconds. You’re arguing about percentages of revenue that are being left on the table because your application is slow.

The data compounds across the industry:

- 53% of mobile users will abandon a site that takes longer than 3 seconds to load

- 50% of users will abandon a shopping cart if the checkout page takes more than 2 seconds to render

- Pinterest reduced perceived wait times by 40% and saw a 15% increase in SEO traffic and a 15% increase in sign-up conversion rates

- Google’s integration of Interaction to Next Paint (INP) into its ranking algorithm means that poor application responsiveness directly degrades SEO rankings, forcing businesses to replace lost organic traffic with paid advertising

That last point is particularly important for investor conversations about deadline performance stabilization. Poor performance doesn’t just reduce conversion rates—it increases Customer Acquisition Cost by degrading organic search visibility. You’re paying more to acquire fewer customers because your application is slow.

The Stakeholder Translation Matrix for Performance Work

When you present the results of your deadline performance stabilization sprint, every technical metric needs a business translation. Here’s the framework:

Reduced page LCP from 4.2s to 1.1s → Implemented CDN caching for static assets → Lower bounce rate, higher organic search ranking → Reduced CAC: More organic traffic converts without additional ad spend

Reduced checkout API response from 3.2s to 450ms → Added Redis caching, fixed N+1 query → Higher cart completion rate → Protected MRR: Fewer abandoned transactions directly defends monthly revenue

Reduced server error rate from 2.3% to 0.1% → Fixed memory leak in payment microservice → Fewer dropped sessions, higher application reliability → Reduced Churn: Reliable software prevents frustration-driven cancellations

Reduced peak compute costs by 35% → Optimized database queries, reduced unnecessary API calls → Lower infrastructure overhead during peak traffic → Improved Gross Margin: Lower burn rate extends runway

The formula for every technical improvement during deadline performance stabilization is: Technical Change → User Experience Impact → Business Outcome → Financial Metric.

Never skip steps in this chain. “We reduced p95 latency by 2.75 seconds” skips everything after the first step. “We reduced checkout response time from 3.2 seconds to 450 milliseconds, which is projected to reduce cart abandonment by approximately 18% based on industry benchmarks, protecting an estimated $47,000 in monthly recurring revenue” is a complete sentence that an investor can evaluate.

For more on connecting technical metrics to business outcomes, Iterators’ metrics tracking guide provides a framework for building business-aligned measurement systems.

Demonstrating ROI on Deadline Performance Stabilization Investments

When you’re asking for resources—time, budget, or external expertise—to execute deadline performance stabilization, you need to frame the investment in terms of its return.

The calculation is simpler than it sounds:

- Identify the revenue or cost impact of the current performance problem (using the Amazon latency model or industry benchmarks)

- Estimate the cost of the stabilization work (engineering hours, potential external support)

- Calculate the payback period

Example: If your application’s slow checkout API is causing a 15% cart abandonment rate above industry baseline, and your monthly GMV is $500,000, you’re losing approximately $75,000 per month in transactions that would otherwise complete. A two-week deadline performance stabilization sprint costing $30,000 in engineering resources has a payback period of less than two weeks. That’s a straightforward investment decision.
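The arithmetic in that example can be written out as a short calculation. This sketch assumes, optimistically, that the sprint recovers the entire excess abandonment; the function names and the 30-day month are assumptions made for illustration.

```python
def monthly_loss(monthly_gmv: float, excess_abandonment_rate: float) -> float:
    """Revenue lost per month to abandonment above the industry baseline."""
    return monthly_gmv * excess_abandonment_rate


def payback_days(sprint_cost: float, monthly_recovered: float,
                 days_per_month: int = 30) -> float:
    """Days until recovered revenue covers the cost of the sprint."""
    return sprint_cost / monthly_recovered * days_per_month


loss = monthly_loss(monthly_gmv=500_000, excess_abandonment_rate=0.15)
days = payback_days(sprint_cost=30_000, monthly_recovered=loss)

print(f"Monthly loss: ${loss:,.0f}")
print(f"Payback period: {days:.0f} days")
```

If you expect to recover only part of the lost revenue, pass that smaller figure as `monthly_recovered`; recovering half, for instance, doubles the payback period.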

The challenge is getting the data to make this calculation. If you don’t have conversion rate data segmented by page load time, you can use industry benchmarks as proxies. It’s not perfect, but it’s far more persuasive than “our API is slow and that’s probably bad.”

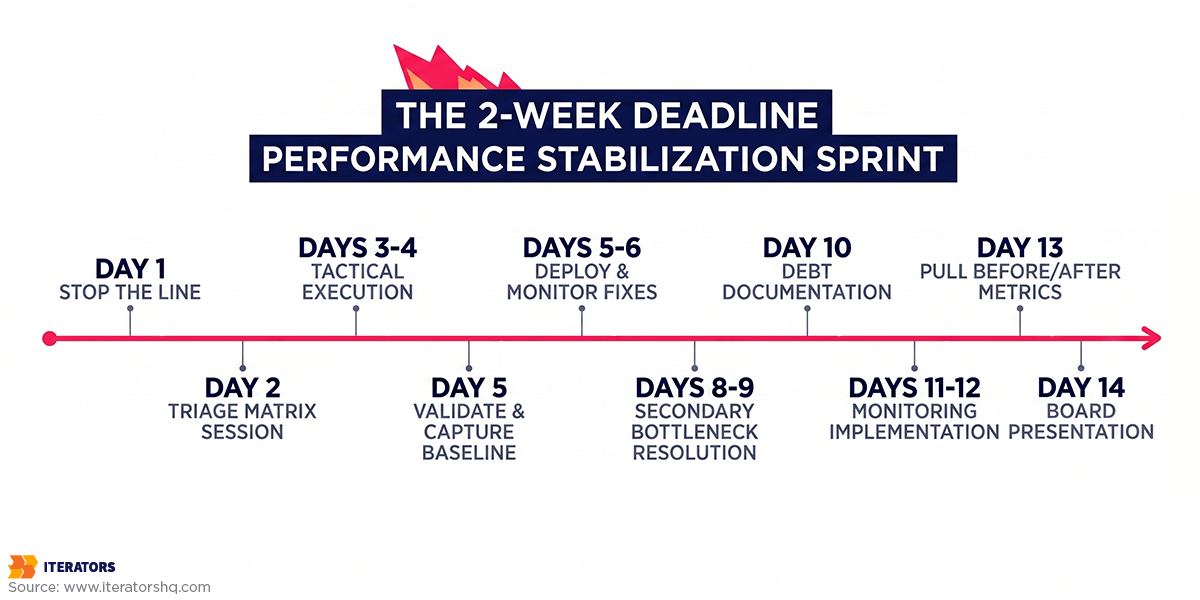

A Two-Week Timeline for Deadline Performance Stabilization

Theory is useful. A day-by-day playbook is more useful.

This is the two-week framework for executing deadline performance stabilization. It’s opinionated by design—under deadline pressure, optionality is the enemy of execution.

Week 1: Triage and Quick Wins for Performance Stabilization

Day 1: Stop the Line

This is the most important day of the deadline performance stabilization sprint. All feature development stops. The engineering leader calls the team together and establishes the emergency protocol. This is not a suggestion—it’s a mandate. Attempting to run a performance stabilization sprint while simultaneously shipping new features is like trying to bail out a sinking boat while someone else is drilling new holes.

The team runs basic profiling against real production traffic patterns. Not to build dashboards—to answer one question: what are the top three endpoints causing the most severe latency or the most frequent crashes? Use existing APM logs, database slow query reports, or a quick profiling session. You need enough data to start plotting the Performance Impact Matrix.

Day 2: The Triage Matrix Session

The engineering and product leads gather all identified bottlenecks and plot them on the Performance Impact Matrix. This session should take no more than two hours. The output is a prioritized list of 3-5 Quick Wins that the team commits to executing this week.

Anything that falls into Strategic Projects gets a documentation ticket and a scheduled sprint. Anything in Money Pits gets explicitly removed from consideration. If stakeholders push back on the deprioritization of Strategic Projects, this is the moment to have that conversation—with the matrix as your evidence.

Days 3-4: Tactical Execution of Performance Fixes

Engineers implement the prioritized Quick Wins. Common high-impact, low-effort fixes at this stage of deadline performance stabilization include:

- Adding missing database indexes on high-frequency queries (often reduces query time from seconds to milliseconds)

- Implementing Redis or Memcached caching on read-heavy endpoints that don’t require real-time data

- Deferring non-critical JavaScript to speed up initial page rendering

- Fixing obvious memory leaks in specific microservices

- Adding connection pooling to database connections that are being opened and closed on every request

- Implementing HTTP/2 if still on HTTP/1.1

- Moving synchronously-loading third-party scripts (analytics, marketing tools) to asynchronous loading

Each fix is implemented in isolation, tested, and documented. The documentation includes: what was changed, why the tactical approach was chosen, and the corresponding technical debt ticket for the proper long-term solution.

Day 5: Validation and Baseline Capture

The patches are deployed to a staging environment for rapid load testing. If stable, they’re pushed to production during a low-traffic window. Critically, capture before-and-after metrics immediately after deployment. These numbers are your evidence for the stakeholder conversation in Week 2.

Don’t wait until the end of the sprint to measure. Capture the delta immediately after each fix so you have clean, unambiguous data showing the impact of specific changes.
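One way to capture that delta is sketched here, assuming you can export raw latency samples in milliseconds for the same endpoint before and after a fix. The function names are illustrative.

```python
from statistics import quantiles


def p95(samples):
    """95th percentile of a list of latency samples (needs at least two values)."""
    return quantiles(samples, n=20, method="inclusive")[18]


def latency_delta(before_ms, after_ms):
    """Summarize the p95 change between two sample sets for one endpoint."""
    before, after = p95(before_ms), p95(after_ms)
    return {
        "before_p95_ms": before,
        "after_p95_ms": after,
        "saved_ms": before - after,
        "improvement_pct": round(100 * (before - after) / before, 1),
    }
```

Recording one such summary per fix, rather than one for the whole sprint, is what keeps the attribution clean when several patches ship in the same week.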

Week 2: Strategic Fortification and Stakeholder Communication

Days 8-9: Secondary Bottleneck Resolution

With the primary crashes and worst latency issues addressed, the team turns to the next tier of problems: those that build up over time rather than failing instantly. Memory leaks that accumulate over hours. Background worker queues that back up under sustained load. Database connection pools that exhaust under peak traffic.

These are often less dramatic than the Week 1 fixes but equally important for sustained stability. A system that performs well for the first hour and then degrades is not stable—it’s just slow to fail.

Day 10: Debt Documentation

Every tactical patch from Week 1 gets formally documented in the project management system. This is not optional. Each Quick Win fix should have a corresponding technical debt ticket that includes the specific refactoring steps, the estimated effort, and the priority for upcoming sprints.

This documentation serves two purposes: it ensures the debt gets addressed rather than accumulating silently, and it demonstrates to stakeholders that the team is managing the trade-offs responsibly rather than just hacking things together.

Days 11-12: Monitoring Implementation

With the system stabilized, the team can now safely invest time in improving observability. Set basic alerting thresholds on the critical paths you’ve just fixed, so that if response times creep back up, you know before users notice. Implement simple health checks on the components that were causing the most failures.

This is the right time to build monitoring infrastructure: after the fires are out, when you can instrument thoughtfully rather than reactively.
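A basic alerting threshold does not need a full monitoring stack to prototype. The sketch below keeps a rolling window of recent latencies and fires once a set share of them exceeds the threshold. The class name, window size, and breach ratio are assumptions chosen for illustration, not recommended values.

```python
from collections import deque


class LatencyAlert:
    """Fire when too many recent requests exceed a latency threshold."""

    def __init__(self, threshold_ms, window=50, breach_ratio=0.2):
        self.threshold_ms = threshold_ms
        self.breach_ratio = breach_ratio
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically

    def record(self, latency_ms):
        """Store one sample and return True if the alert should fire."""
        self.samples.append(latency_ms)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough data to judge yet
        breaches = sum(1 for s in self.samples if s > self.threshold_ms)
        return breaches / len(self.samples) >= self.breach_ratio
```

Alerting on a ratio over a window, rather than on any single slow request, avoids paging the team for one-off outliers.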

Days 13-14: The Executive Translation

Pull the before-and-after metrics. Apply the Stakeholder Translation Matrix. Convert technical improvements into financial projections. Prepare the board or investor presentation of your stabilization results.

The narrative structure should be: here’s what was broken, here’s what we fixed, here’s the measurable improvement, here’s what that means for the business, and here’s the plan to prevent recurrence.

“We reduced checkout API response time from 3.2 seconds to 450 milliseconds. Based on industry benchmarks and our historical analytics, this improvement is projected to reduce cart abandonment by approximately 18%, protecting an estimated $47,000 in monthly recurring revenue. We’ve also reduced our peak compute costs by 35% through query optimization, saving approximately $15,000 per month in infrastructure costs. The remaining technical debt has been documented and scheduled for the next two sprint cycles.”

That’s a presentation that builds confidence, not confusion.
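The arithmetic behind a statement like that can be kept deliberately simple. The sketch below uses placeholder inputs (cart counts, order value, compute cost) that are not taken from this article or from any real system. Substitute figures from your own analytics and billing data before quoting a number to anyone.

```python
def protected_monthly_revenue(abandoned_carts, avg_order_value, abandonment_reduction):
    """Revenue recovered if a fraction of previously abandoned carts now convert."""
    recovered_carts = abandoned_carts * abandonment_reduction
    return round(recovered_carts * avg_order_value, 2)


def monthly_infra_savings(monthly_compute_cost, cost_reduction):
    """Monthly savings from a proportional reduction in compute spend."""
    return round(monthly_compute_cost * cost_reduction, 2)


# Placeholder inputs only: replace with your own measured values.
revenue = protected_monthly_revenue(
    abandoned_carts=2000, avg_order_value=60.0, abandonment_reduction=0.18
)
savings = monthly_infra_savings(monthly_compute_cost=10_000.0, cost_reduction=0.35)

print(f"Protected revenue: ${revenue:,.0f}/month")
print(f"Infrastructure savings: ${savings:,.0f}/month")
```

Keeping the inputs explicit also makes the projection easy to defend when someone on the board asks where the number came from.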

When to Bring External Expertise for Deadline Performance Stabilization

There are situations where internal teams cannot execute deadline performance stabilization effectively, regardless of skill level.

The most common is the bus factor problem: the engineers who understand the slow components have left the company, or the system was built by a contractor who is no longer available, or the architecture is so poorly documented that understanding what’s actually happening requires weeks of archaeology. Under deadline pressure, weeks of archaeology is time you don’t have.

The second situation is emotional investment. Teams that have built a system over years have deep attachments to architectural decisions that made sense at the time. Asking those same engineers to implement tactical patches that “violate” those decisions is genuinely difficult. External teams don’t have that baggage. They look at the system fresh, identify the bottlenecks without emotional context, and implement the pragmatic solution without the internal friction.

The third situation is specialization. Deadline performance stabilization for high-load distributed systems, real-time streaming infrastructure, or complex database architectures requires specific expertise that may not exist on your current team. Spending two weeks learning while simultaneously trying to stabilize a production system is a recipe for missed deadlines and introduced regressions.

Iterators’ development team extension services exist precisely for these deadline performance stabilization scenarios—bringing in senior engineers who can integrate with your team, execute the triage and stabilization work, and transfer knowledge back to your internal team once the crisis has passed.

The decision to bring in external expertise should be made on Day 1, not Day 10. If you recognize on Day 1 that your team lacks the specific skills or bandwidth to execute deadline performance stabilization, two weeks of external support is far more effective than ten days of struggling internally followed by a desperate call for help with four days left.

For organizations implementing comprehensive quality assurance processes alongside performance work, external expertise can accelerate both tracks simultaneously.

“The biggest mistake we see is teams spending the first week setting up perfect monitoring instead of fixing the three known issues that are killing 80% of their users. Measure what matters, fix what hurts, then optimize your measurement.” — Jacek Głodek, Founder @ Iterators

Understanding Stabilization vs. Optimization in Performance Work

These terms are often used interchangeably, but they describe fundamentally different activities with different success criteria.

Performance optimization is an ongoing, open-ended process of improving system performance toward an ideal state. It assumes adequate time, the ability to refactor architecture, and the luxury of pursuing improvements beyond the immediate user-visible threshold. Optimization asks: “How fast can we make this?”

Deadline performance stabilization is a time-bounded, business-critical intervention to bring a degraded system back to an acceptable baseline. It assumes severe time constraints, prioritizes business continuity over architectural elegance, and accepts tactical trade-offs that would be unacceptable in an optimization context. Stabilization asks: “What’s the minimum we need to fix to stop losing users and revenue in the next two weeks?”

The distinction matters because the strategies are different. Optimization can afford to be comprehensive. Deadline performance stabilization must be ruthlessly selective. Optimization can pursue the architecturally correct solution. Stabilization must often accept the pragmatic solution. Optimization measures success by how much better the system is than before. Stabilization measures success by whether the business milestone was achieved.

Understanding which mode you’re in determines every decision that follows. If you’re in deadline performance stabilization mode and you’re using optimization strategies—comprehensive refactoring, perfect monitoring infrastructure, complete test coverage—you will miss your deadline. If you’re in optimization mode and you’re using stabilization strategies—tactical patches, minimal testing, deferred debt—you’ll accumulate technical debt that eventually requires another emergency stabilization sprint.

The two-week deadline puts you unambiguously in deadline performance stabilization mode. Act accordingly.

For more on navigating the tension between simplicity and architectural correctness, Iterators’ engineering approaches guide covers the full decision framework.

Common Mistakes That Harm Deadline Performance Stabilization

A brief list of things that feel like progress but actively harm deadline performance stabilization efforts:

Horizontal scaling as a first response: Adding more servers is the expensive, slow, and often ineffective response to performance problems. Slack’s 2020 outage illustrates this perfectly: when their system started failing, they scaled up the web tier by 75%—the most servers they’d ever run. It didn’t help, because the problem was database lock contention, not insufficient web capacity. More workers waiting in line doesn’t fix a bottleneck; it just makes the queue longer. Horizontal scaling is appropriate after you’ve fixed the bottleneck and need to handle increased load. It’s not a substitute for fixing the bottleneck during deadline performance stabilization.

Deploying performance fixes without load testing: A fix that works correctly under normal traffic can introduce catastrophic failures under peak load. Before deploying any significant performance change to production during deadline performance stabilization, run a basic load test in staging. It doesn’t need to be comprehensive—just enough to verify that the fix doesn’t introduce new failure modes under realistic traffic conditions.

Fixing performance issues in isolation without measuring the system impact: Sometimes fixing one bottleneck reveals a second bottleneck that was hidden behind the first. This is expected and normal—it’s called the “shifting bottleneck” problem. After each major fix during deadline performance stabilization, re-profile the system to ensure you’re still working on the actual current bottleneck rather than the previous one.

Communicating only technical progress to stakeholders: If your weekly update to leadership is “we reduced p95 latency by 800ms and fixed three N+1 queries,” you’re creating anxiety rather than confidence. Stakeholders can’t tell whether that is good progress or terrible progress. Every update should include the business translation: “We’ve addressed the primary cause of checkout timeouts. Based on our testing, this should reduce cart abandonment by approximately 15%. We’re on track to hit our performance targets by Friday.”
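For the load-testing point above, a basic check can be as small as the following sketch. It takes any zero-argument callable (for example, a function that issues one request against your staging endpoint), runs it concurrently, and reports failures and latency. The function name and default numbers are assumptions. A dedicated load-testing tool is the better choice once the deadline has passed.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def load_test(call, total_requests=200, concurrency=20):
    """Invoke `call` total_requests times across a thread pool and summarize results."""

    def timed(_):
        start = time.perf_counter()
        try:
            call()
            ok = True
        except Exception:
            ok = False  # any exception counts as a failed request
        return (time.perf_counter() - start) * 1000, ok

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed, range(total_requests)))

    durations = sorted(duration for duration, _ in results)
    return {
        "requests": total_requests,
        "failures": sum(1 for _, ok in results if not ok),
        "median_ms": durations[len(durations) // 2],
        "max_ms": durations[-1],
    }
```

Run it once before the fix and once after with the same settings, and treat any rise in `failures` as a reason to stop the deployment.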

FAQ About Deadline Performance Stabilization

Q: How do I know which performance issues to fix first during deadline performance stabilization?

Use the Performance Impact Matrix. Profile your application against real production traffic to identify all significant bottlenecks, then plot them based on business impact (percentage of users affected, direct revenue impact, SLA risk) versus engineering effort (time to implement, blast radius, testing complexity). Focus exclusively on Quick Wins—high impact, low effort—during the first week of your deadline performance stabilization sprint. Everything else gets documented and scheduled for later.

Q: Should I rewrite slow code or add caching as a temporary fix?

Under deadline performance stabilization pressure, add caching as a temporary fix in almost every case. Rewrites take months, introduce massive functional risk, and guarantee missed deadlines. A well-implemented caching layer can stabilize a slow system in days and buy you the time to execute a proper refactoring without emergency pressure. Document the caching layer as technical debt and schedule the refactoring for the next sprint cycle.
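As a minimal sketch of the idea, here is an in-process cache with a time-to-live, written as a decorator. It uses a plain dictionary rather than Redis or Memcached, so it only illustrates the pattern. The names `ttl_cache` and `clock` are invented for this example, and a shared cache server is the more realistic choice once you run more than one application process.

```python
import time
from functools import wraps


def ttl_cache(ttl_seconds, clock=time.monotonic):
    """Cache a function's results per positional arguments for ttl_seconds."""

    def decorator(func):
        store = {}  # args -> (stored_at, value); unbounded, fine for a sketch only

        @wraps(func)
        def wrapper(*args):
            now = clock()
            hit = store.get(args)
            if hit is not None and now - hit[0] < ttl_seconds:
                return hit[1]  # still fresh: skip the slow call
            value = func(*args)
            store[args] = (now, value)
            return value

        return wrapper

    return decorator
```

Applied to a slow read path, for example `@ttl_cache(30)` on a function that loads a product listing, this trades up to 30 seconds of staleness for far fewer slow calls. That is why it only suits endpoints that do not need real-time data.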

Q: What performance metrics do investors actually care about during stabilization?

Investors care about financial metrics, not technical ones. You need to translate every technical improvement from deadline performance stabilization into its business equivalent: reduced Customer Acquisition Cost (from improved SEO rankings and conversion rates), protected Monthly Recurring Revenue (from reduced cart abandonment and fewer dropped sessions), lower churn rate (from improved reliability), and better gross margins (from reduced infrastructure costs). Never present raw technical metrics to the board without the business translation.

Q: How much performance improvement is “enough” to satisfy stakeholders?

Enough is defined by user expectations and industry benchmarks, not engineering perfection. The primary threshold for mobile is 3 seconds: according to widely cited Google research, 53% of mobile site visits are abandoned when a page takes longer than 3 seconds to load. For checkout flows, a common target is 2 seconds. If your critical paths are above these thresholds, getting below them is the immediate goal. Beyond that, Amazon’s often-cited research associates every additional 100ms of latency with measurable revenue loss. Present improvements in terms of crossing these thresholds, not in terms of raw millisecond deltas.

Q: When should I bring in external performance experts for deadline stabilization?

Bring in external experts when: your internal team lacks specific expertise in the failing components, key engineers who built the system are no longer available, your team is too emotionally invested in the existing architecture to implement pragmatic tactical fixes, or you simply don’t have the bandwidth to execute a focused deadline performance stabilization sprint while also keeping the lights on. The decision should be made on Day 1, not Day 10. Two weeks of external support is dramatically more effective than ten days of struggling followed by a desperate call for help with four days left.

Q: How do I prevent performance degradation after initial stabilization?

Pay down the technical debt from deadline performance stabilization systematically. Allocate a fixed percentage of future sprints—20-30% is a reasonable starting point—to refactoring the tactical patches implemented during the emergency. Implement basic performance monitoring with alerting thresholds on your critical paths so you’re notified of regressions before users notice them. Consider establishing a performance budget: explicit thresholds for key metrics that trigger an investigation if crossed. The goal is to shift from reactive firefighting to proactive monitoring, catching the next performance problem before it becomes the next emergency.

Conclusion: Surviving Your Next Deadline Performance Stabilization

Deadline performance stabilization is a discipline that demands the active suppression of your best engineering instincts.

Your instinct to build it right the first time is correct—in a six-month roadmap. Your instinct to understand the system completely before changing it is correct—when you have weeks to observe. Your instinct to fix the most interesting problem is correct—when you have the luxury of curiosity.

Under a two-week deadline, none of those instincts serve you. What serves you during deadline performance stabilization is a ruthless prioritization framework, the discipline to implement imperfect solutions quickly, the documentation habits that make those imperfect solutions manageable, and the communication skills to translate technical progress into the financial language that determines whether your company gets its next funding round.

The Performance Impact Matrix gives you the framework. The Stakeholder Translation Matrix gives you the language. The two-week playbook gives you the structure. What you bring is the discipline to follow them when every engineering instinct is pulling you toward the interesting problem, the perfect solution, and the comprehensive rewrite.

Fix the right things first. Document everything else. Translate your wins into business outcomes. That’s how you survive the next fourteen days.

Need expert help prioritizing and executing performance fixes under deadline pressure? Schedule a free consultation with Iterators. We specialize in rapid deadline performance stabilization for startups and scale-ups. We’ve stabilized production systems for companies hours before critical demos, and we know the difference between a Quick Win and a Money Pit.